AV1 Bitstream & Decoding Process Specification

Last modified: 2023-05-25 12:27 PT

Authors

Peter de Rivaz, Argon Design Ltd

Jack Haughton, Argon Design Ltd

Codec Working Group Chair

Adrian Grange, Google LLC

Document Design

Lou Quillio, Google LLC

Notice

This is an internal AOMedia working document and not an approved version of the AV1 specification. The approved AV1 bitstream specification can be found here: https://aomediacodec.github.io/av1-spec/av1-spec.pdf

Abstract

This document defines the bitstream formats and decoding process for the Alliance for Open Media AV1 video codec.

Contents

- Scope

- Terms and definitions

- Symbols and abbreviated terms

- Conventions

- Syntax structures

- General

- Low overhead bitstream format

- OBU syntax

- Reserved OBU syntax

- Sequence header OBU syntax

- Temporal delimiter OBU syntax

- Padding OBU syntax

- Metadata OBU syntax

- Frame header OBU syntax

- General frame header OBU syntax

- Uncompressed header syntax

- Get relative distance function

- Reference frame marking function

- Frame size syntax

- Render size syntax

- Frame size with refs syntax

- Superres params syntax

- Compute image size function

- Interpolation filter syntax

- Loop filter params syntax

- Quantization params syntax

- Delta quantizer syntax

- Segmentation params syntax

- Tile info syntax

- Tile size calculation function

- Quantizer index delta parameters syntax

- Loop filter delta parameters syntax

- CDEF params syntax

- Loop restoration params syntax

- TX mode syntax

- Skip mode params syntax

- Frame reference mode syntax

- Global motion params syntax

- Global param syntax

- Decode signed subexp with ref syntax

- Decode unsigned subexp with ref syntax

- Decode subexp syntax

- Inverse recenter function

- Film grain params syntax

- Temporal point info syntax

- Frame OBU syntax

- Tile group OBU syntax

- General tile group OBU syntax

- Decode tile syntax

- Clear block decoded flags function

- Decode partition syntax

- Decode block syntax

- Mode info syntax

- Intra frame mode info syntax

- Intra segment ID syntax

- Read segment ID syntax

- Skip mode syntax

- Skip syntax

- Quantizer index delta syntax

- Loop filter delta syntax

- Segmentation feature active function

- TX size syntax

- Block TX size syntax

- Var TX size syntax

- Inter frame mode info syntax

- Inter segment ID syntax

- Is inter syntax

- Get segment ID function

- Intra block mode info syntax

- Inter block mode info syntax

- Filter intra mode info syntax

- Ref frames syntax

- Assign MV syntax

- Read motion mode syntax

- Read inter intra syntax

- Read compound type syntax

- Get mode function

- MV syntax

- MV component syntax

- Compute prediction syntax

- Residual syntax

- Transform block syntax

- Transform tree syntax

- Get TX size function

- Get plane residual size function

- Coefficients syntax

- Compute transform type function

- Get scan function

- Intra angle info luma syntax

- Intra angle info chroma syntax

- Is directional mode function

- Read CFL alphas syntax

- Palette mode info syntax

- Transform type syntax

- Get transform set function

- Palette tokens syntax

- Palette color context function

- Is inside function

- Is inside filter region function

- Clamp MV row function

- Clamp MV col function

- Clear CDEF function

- Read CDEF syntax

- Read loop restoration syntax

- Read loop restoration unit syntax

- Tile list OBU syntax

- Syntax structures semantics

- General

- OBU semantics

- Reserved OBU semantics

- Sequence header OBU semantics

- Temporal delimiter OBU semantics

- Padding OBU semantics

- Metadata OBU semantics

- General metadata OBU semantics

- Metadata ITUT T35 semantics

- Metadata high dynamic range content light level semantics

- Metadata high dynamic range mastering display color volume semantics

- Metadata scalability semantics

- Scalability structure semantics

- General

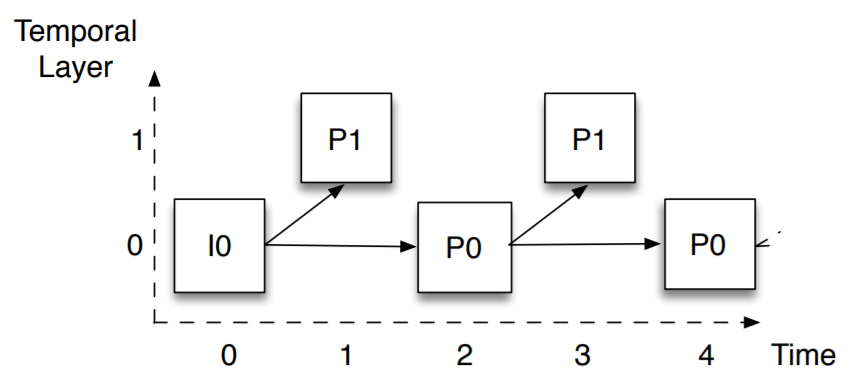

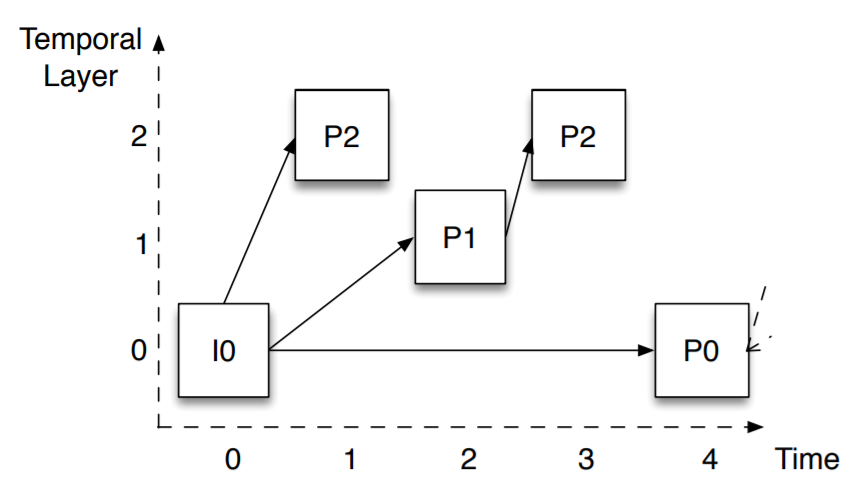

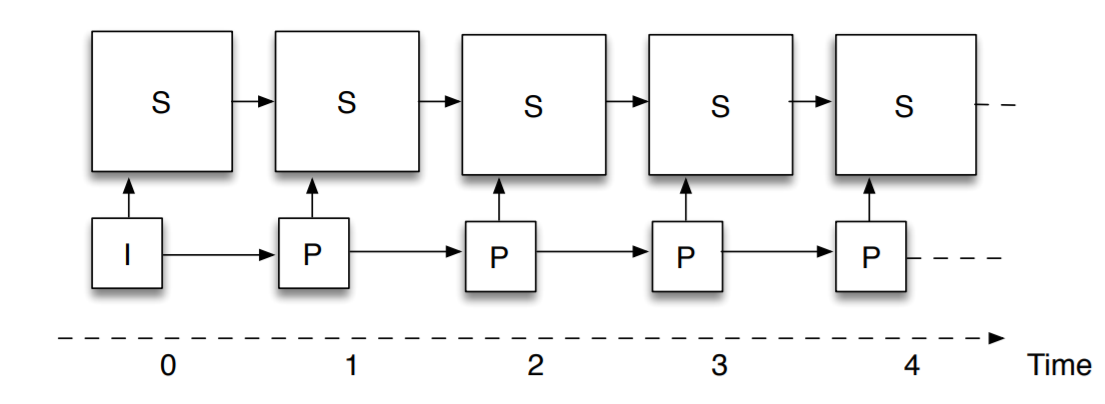

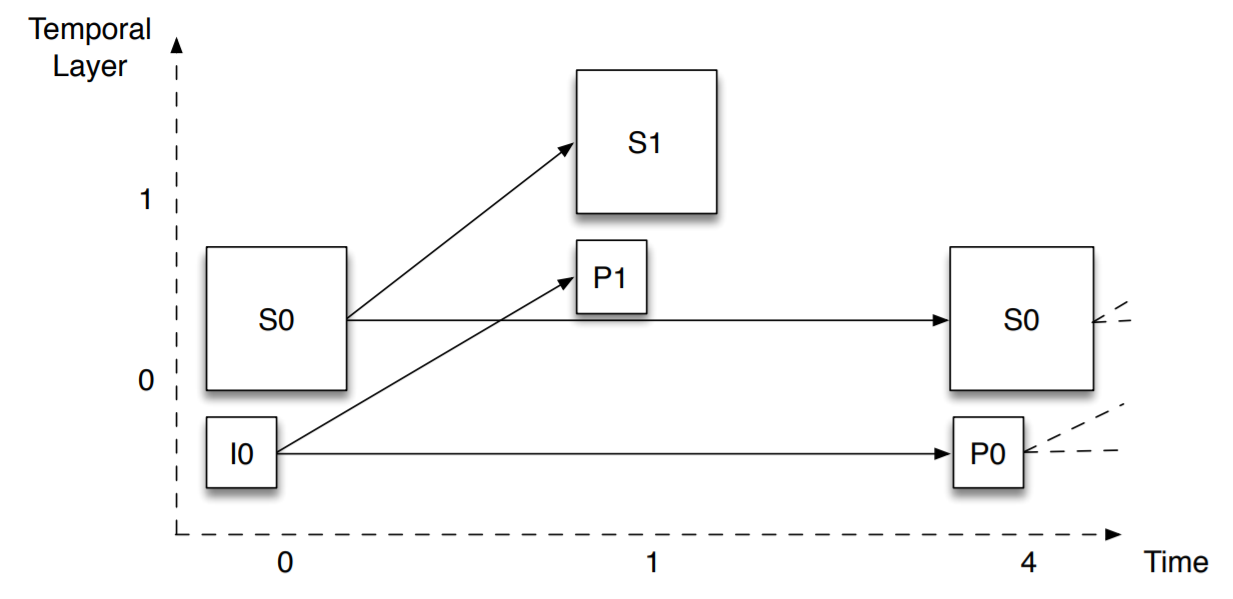

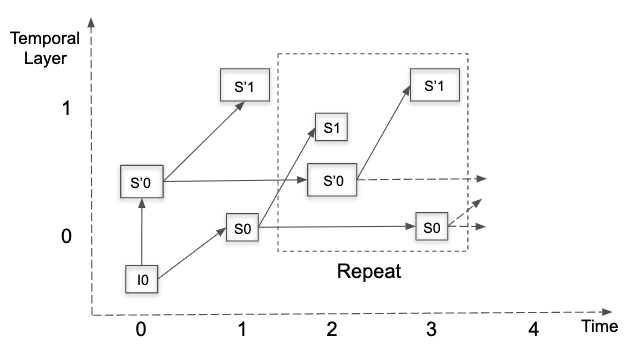

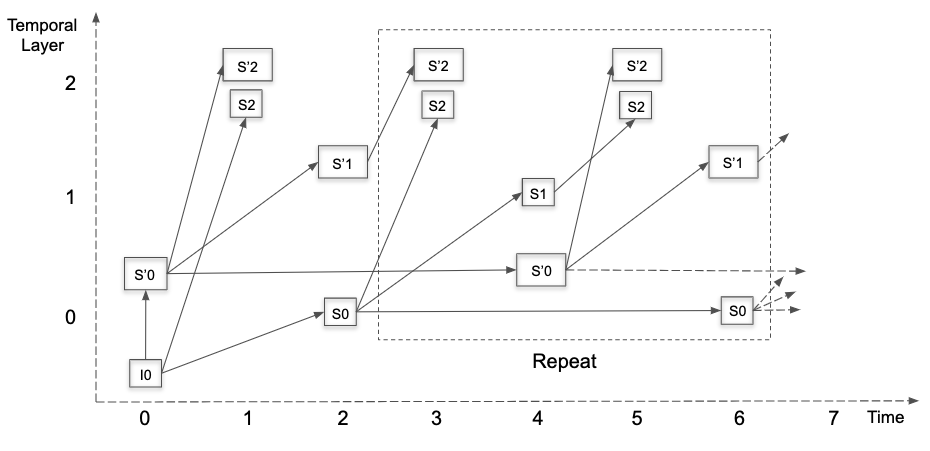

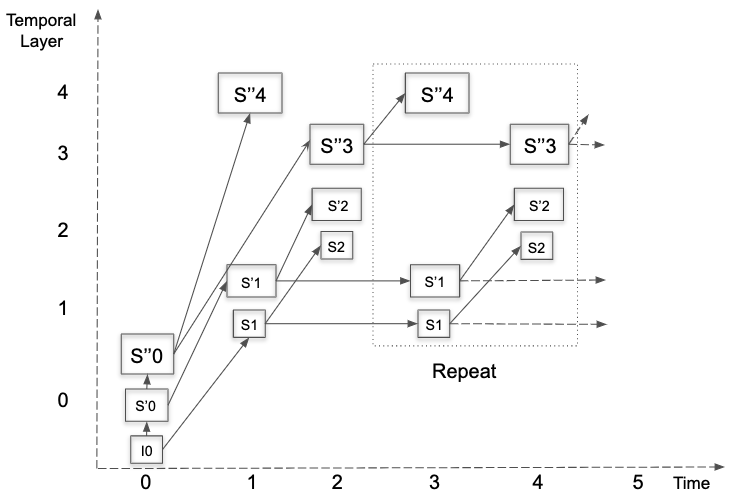

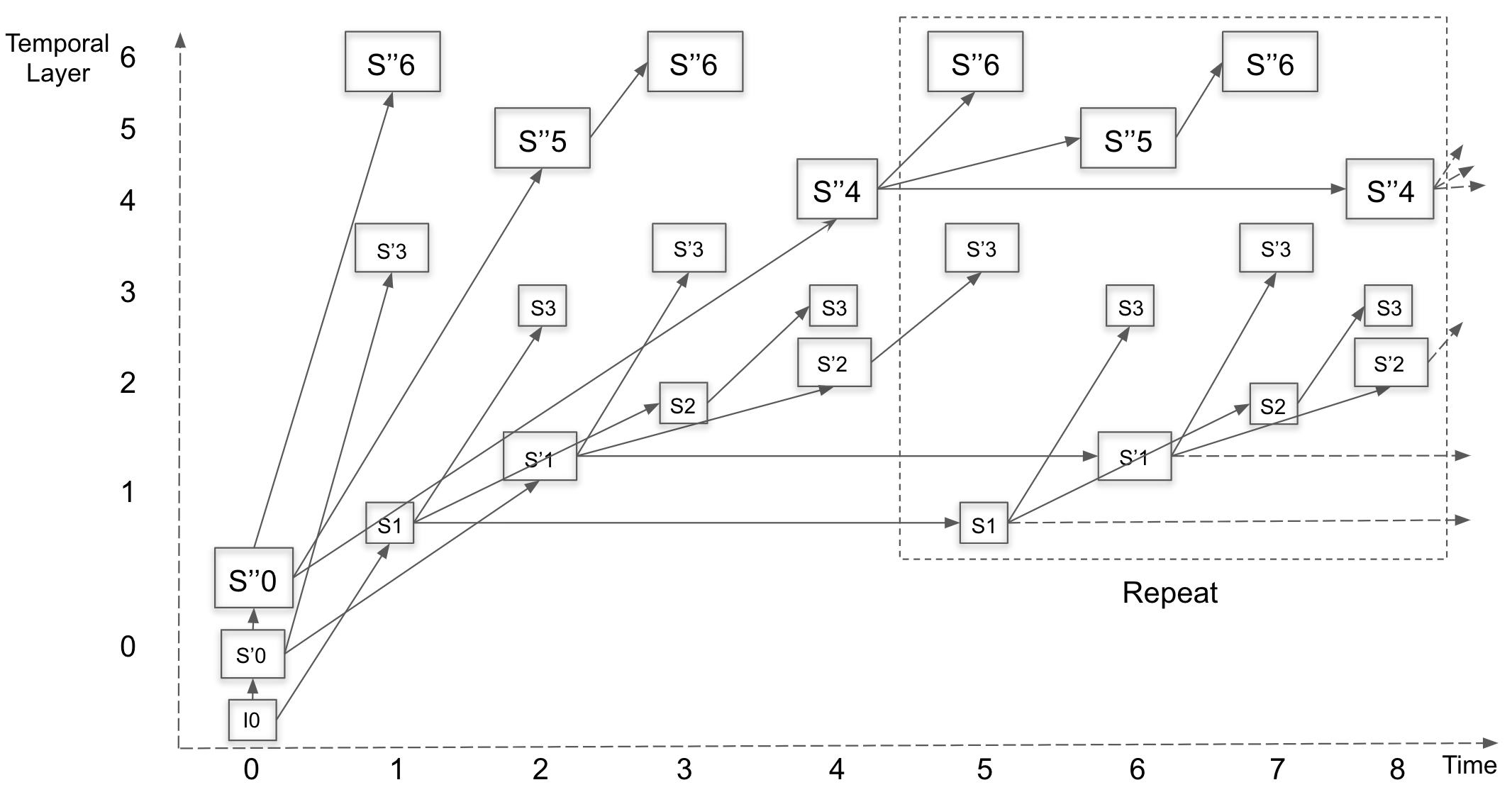

- L1T2 (Informative)

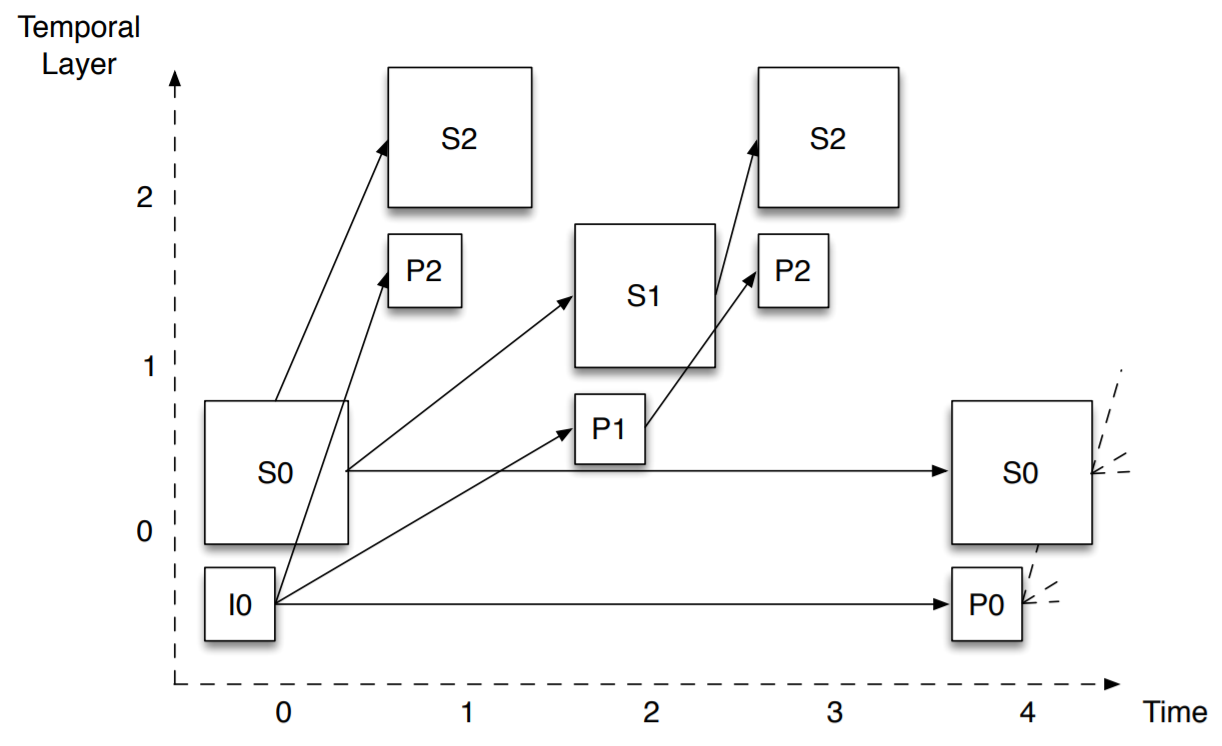

- L1T3 (Informative)

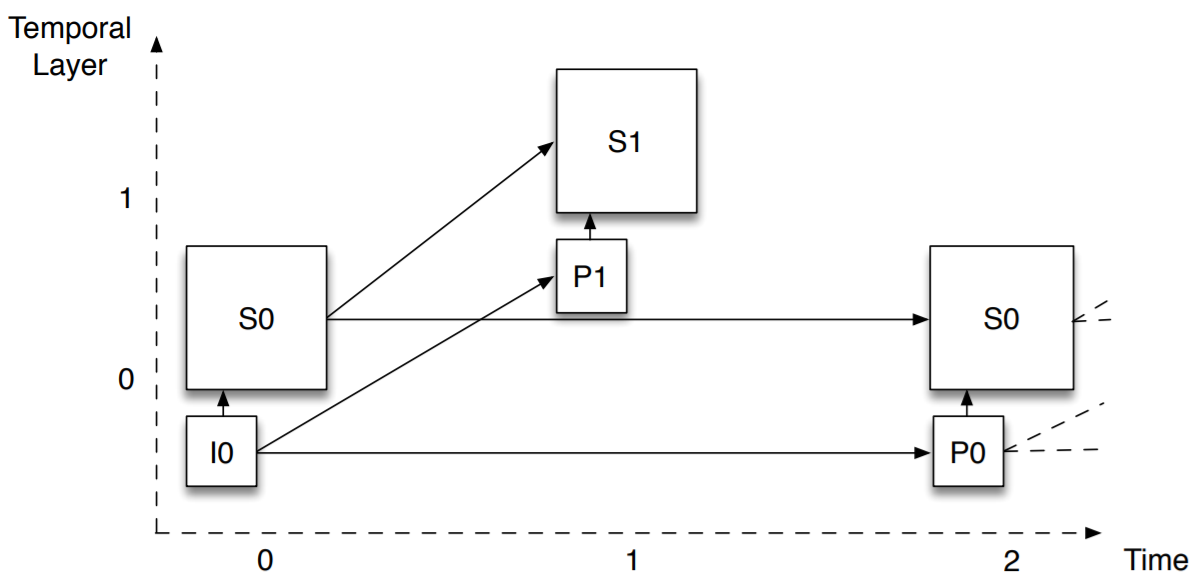

- L2T1 / L2T1h (Informative)

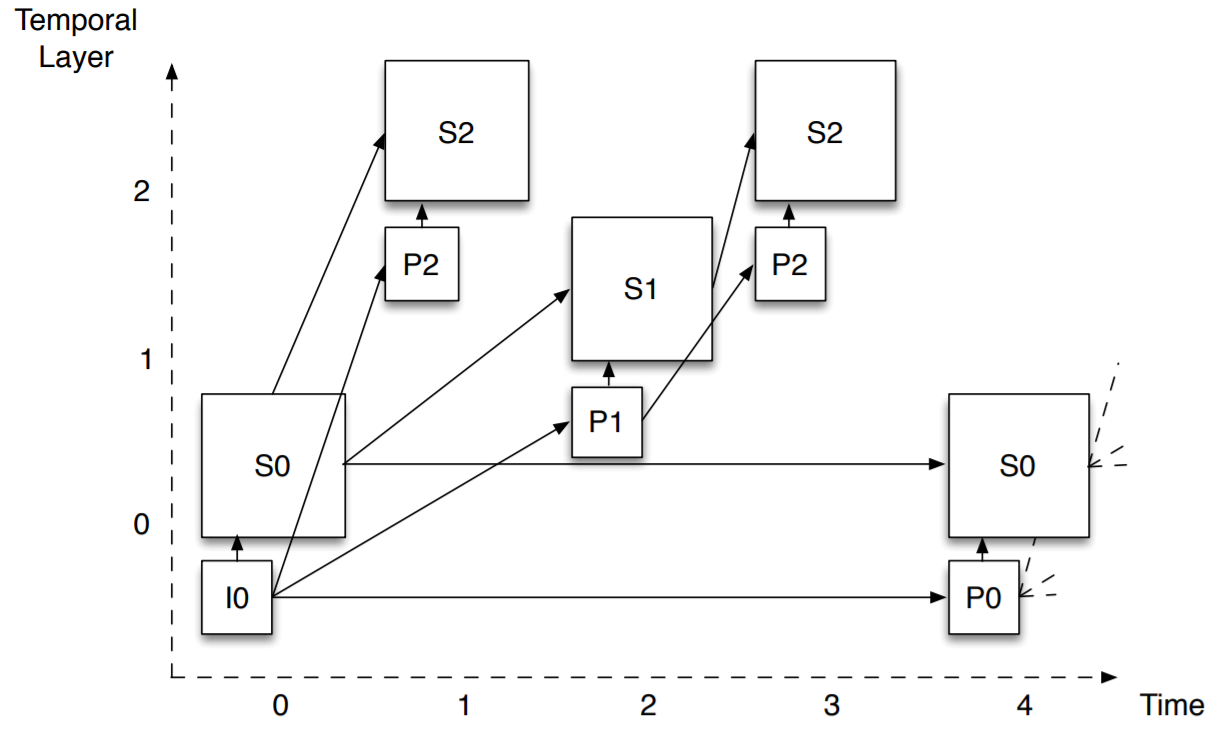

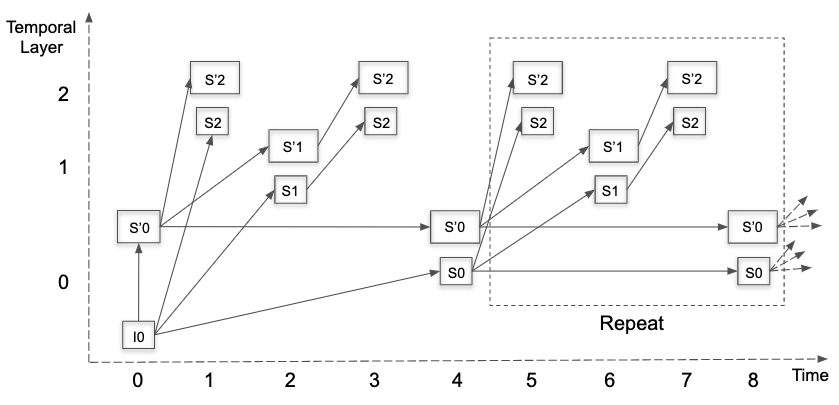

- L2T2 / L2T2h (Informative)

- L2T3 / L2T3h (Informative)

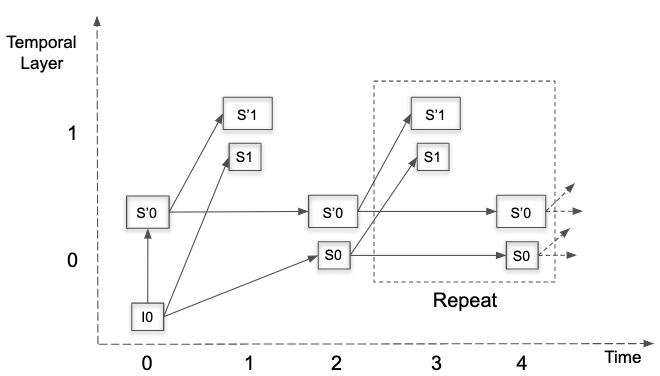

- S2T1 / S2T1h (Informative)

- S2T2 / S2T2h (Informative)

- S2T3 / S2T3h (Informative)

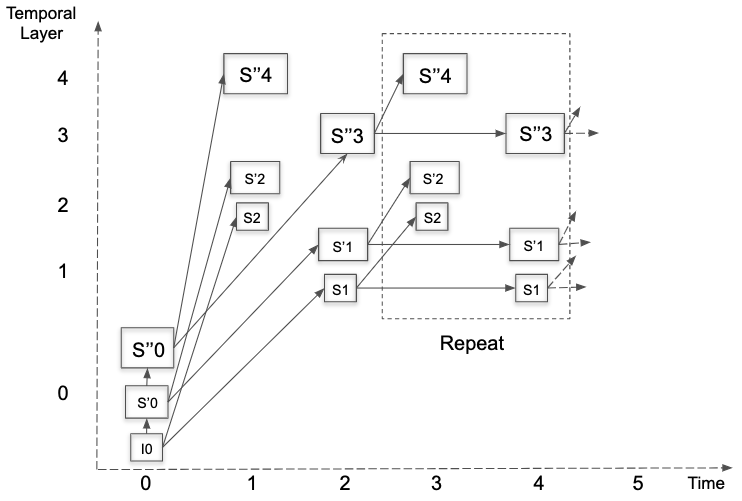

- L3T1 (Informative)

- L3T2 (Informative)

- L3T3 (Informative)

- S3T1 (Informative)

- S3T2 (Informative)

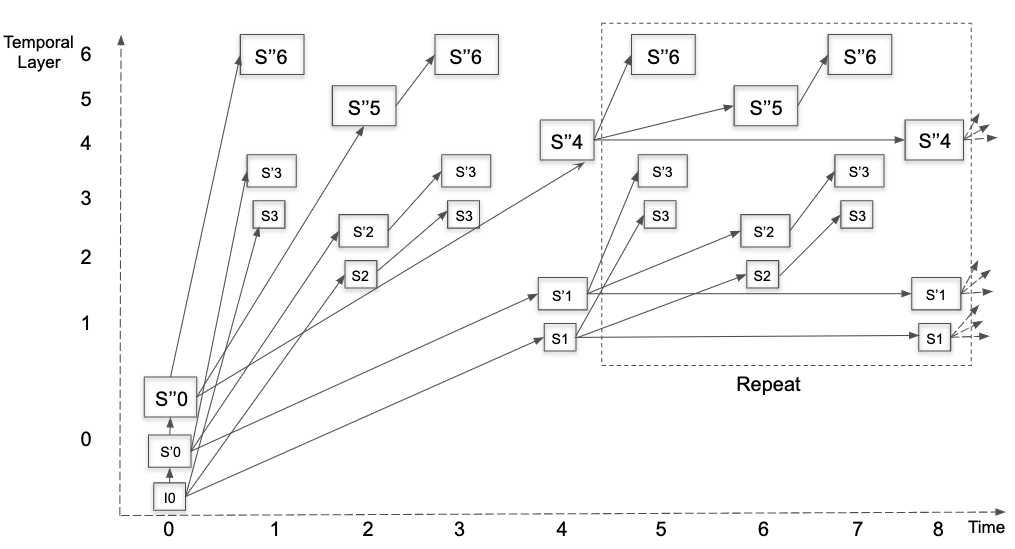

- S3T3 (Informative)

- Metadata timecode semantics

- Frame header OBU semantics

- General frame header OBU semantics

- Uncompressed header semantics

- Reference frame marking semantics

- Frame size semantics

- Render size semantics

- Frame size with refs semantics

- Superres params semantics

- Compute image size semantics

- Interpolation filter semantics

- Loop filter semantics

- Quantization params semantics

- Delta quantizer semantics

- Segmentation params semantics

- Tile info semantics

- Quantizer index delta parameters semantics

- Loop filter delta parameters semantics

- Global motion params semantics

- Global param semantics

- Decode subexp semantics

- Film grain params semantics

- TX mode semantics

- Skip mode params semantics

- Frame reference mode semantics

- Temporal point info semantics

- Frame OBU semantics

- Tile group OBU semantics

- General tile group OBU semantics

- Decode tile semantics

- Clear block decoded flags semantics

- Decode partition semantics

- Decode block semantics

- Intra frame mode info semantics

- Intra segment ID semantics

- Read segment ID semantics

- Inter segment ID semantics

- Skip mode semantics

- Skip semantics

- Quantizer index delta semantics

- Loop filter delta semantics

- CDEF params semantics

- Loop restoration params semantics

- TX size semantics

- Block TX size semantics

- Var TX size semantics

- Transform type semantics

- Is inter semantics

- Intra block mode info semantics

- Inter block mode info semantics

- Filter intra mode info semantics

- Ref frames semantics

- Assign MV semantics

- Read motion mode semantics

- Read inter intra semantics

- Read compound type semantics

- MV semantics

- MV component semantics

- Compute prediction semantics

- Residual semantics

- Transform block semantics

- Coefficients semantics

- Intra angle info semantics

- Read CFL alphas semantics

- Palette mode info semantics

- Palette tokens semantics

- Palette color context semantics

- Read CDEF semantics

- Read loop restoration unit semantics

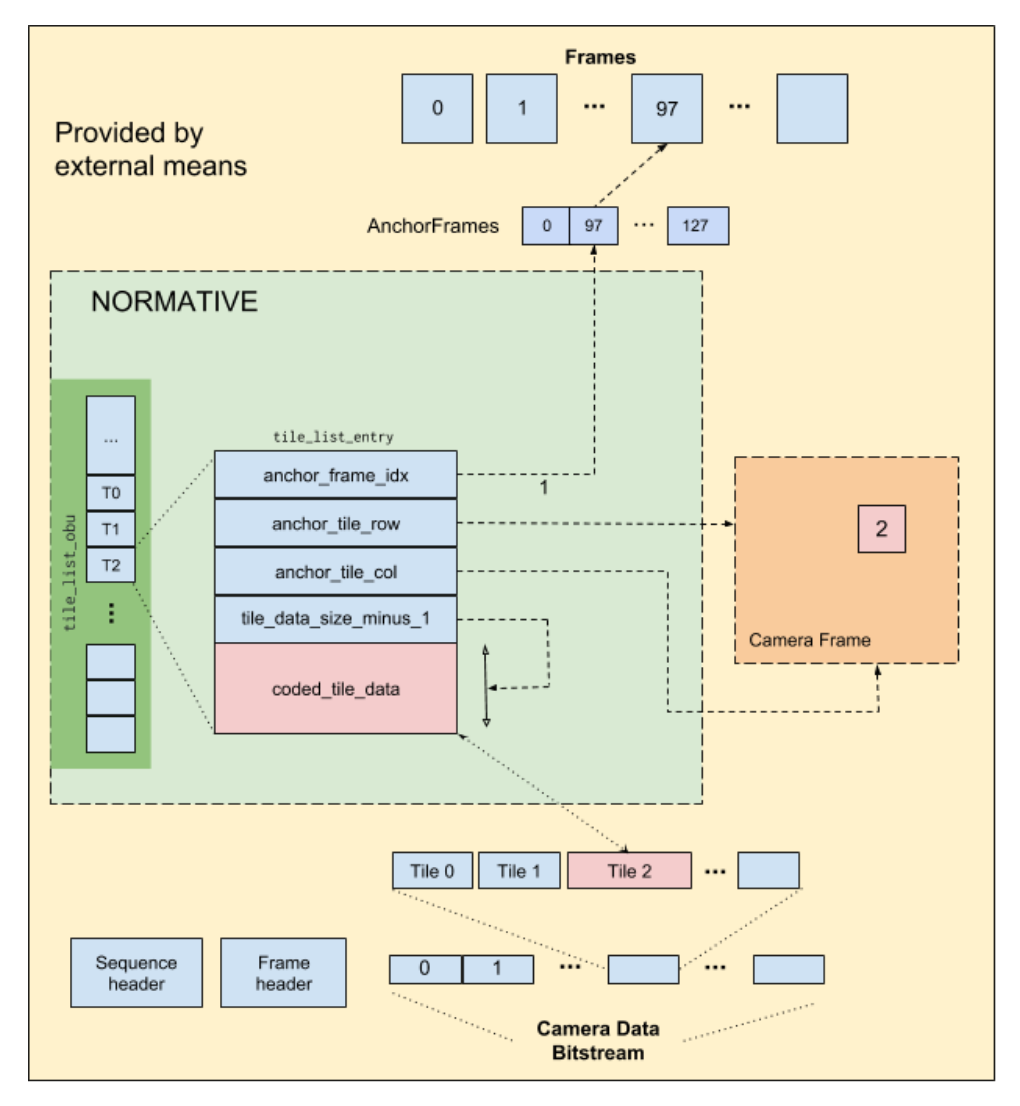

- Tile list OBU semantics

- Decoding process

- Overview

- General decoding process

- Large scale tile decoding process

- Decode frame wrapup process

- Ordering of OBUs

- Random access decoding

- Frame end update CDF process

- Set frame refs process

- Motion field estimation process

- Motion vector prediction processes

- General

- Find MV stack process

- Setup global MV process

- Scan row process

- Scan col process

- Scan point process

- Temporal scan process

- Temporal sample process

- Add reference motion vector process

- Search stack process

- Compound search stack process

- Lower precision process

- Sorting process

- Extra search process

- Add extra MV candidate process

- Context and clamping process

- Has overlappable candidates process

- Find warp samples process

- Prediction processes

- General

- Intra prediction process

- General

- Basic intra prediction process

- Recursive intra prediction process

- Directional intra prediction process

- DC intra prediction process

- Smooth intra prediction process

- Filter corner process

- Intra filter type process

- Intra edge filter strength selection process

- Intra edge upsample selection process

- Intra edge upsample process

- Intra edge filter process

- Inter prediction process

- General

- Rounding variables derivation process

- Motion vector scaling process

- Block inter prediction process

- Block warp process

- Setup shear process

- Resolve divisor process

- Warp estimation process

- Overlapped motion compensation process

- Overlap blending process

- Wedge mask process

- Difference weight mask process

- Intra mode variant mask process

- Mask blend process

- Distance weights process

- Palette prediction process

- Predict chroma from luma process

- Reconstruction and dequantization

- Inverse transform process

- General

- 1D transforms

- Butterfly functions

- Inverse DCT array permutation process

- Inverse DCT process

- Inverse ADST input array permutation process

- Inverse ADST output array permutation process

- Inverse ADST4 process

- Inverse ADST8 process

- Inverse ADST16 process

- Inverse ADST process

- Inverse Walsh-Hadamard transform process

- Inverse identity transform 4 process

- Inverse identity transform 8 process

- Inverse identity transform 16 process

- Inverse identity transform 32 process

- Inverse identity transform process

- 2D inverse transform process

- Loop filter process

- CDEF process

- Upscaling process

- Loop restoration process

- Output process

- Motion field motion vector storage process

- Reference frame update process

- Reference frame loading process

- Parsing process

- Additional tables

- Annex A: Profiles and levels

- Annex B: Length delimited bitstream format

- Annex C: Error resilience behavior (informative)

- Annex D: Large scale tile use case (informative)

- Annex E: Decoder model

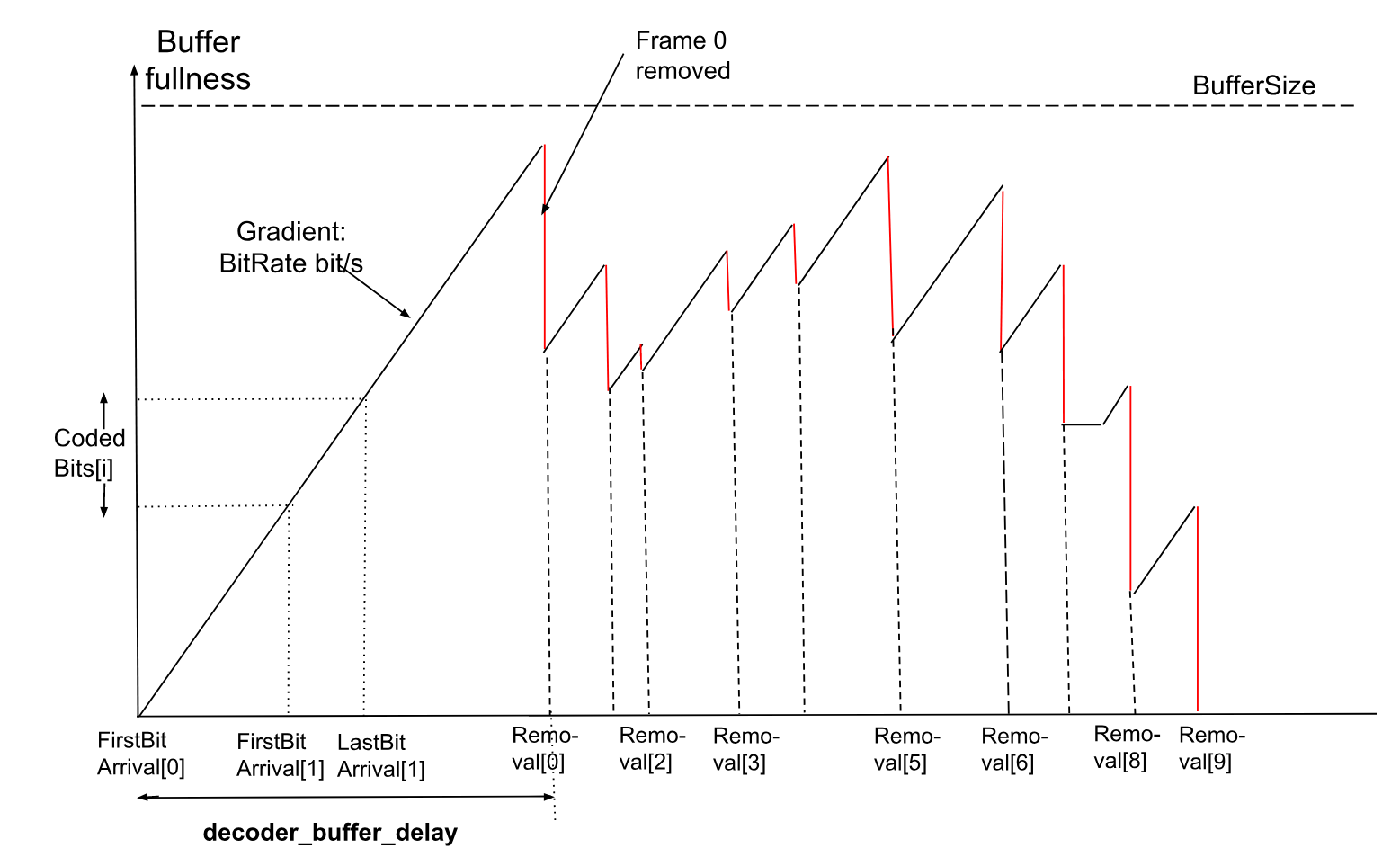

- General

- Decoder model definitions

- Operating modes

- Frame timing definitions

- Decoder model

- Bitstream conformance

- General

- Decoder buffer delay consistency across random access points (applies to decoding schedule mode)

- Smoothing buffer overflow

- Smoothing buffer underflow

- Minimum decode time (applies to decoding schedule mode)

- Minimum presentation interval

- Decode deadline

- Level imposed constraints

- Decode process constraints

- Bibliography

Scope

This document specifies the Alliance for Open Media AV1 bitstream formats and decoding process.

Terms and definitions

For the purposes of this document, the following terms and definitions apply:

- AC coefficient

Any transform coefficient whose frequency indices are non-zero in at least one dimension.

- Altref

(Alternative reference frame) A frame that can be used in inter coding.

- Base layer

The layer with spatial_id and temporal_id values equal to 0.

- Bitstream

The sequence of bits generated by encoding a sequence of frames.

- Bit string

An ordered string with a limited number of bits. The leftmost bit is the most significant bit (MSB); the rightmost bit is the least significant bit (LSB).

- Block

A square or rectangular region of samples.

- Block scan

A specified serial ordering of quantized coefficients.

- Byte

An 8-bit bit string.

- Byte alignment

A bit is byte aligned if its position is an integer multiple of eight bits from the position of the first bit in the bitstream.

- CDEF

Constrained Directional Enhancement Filter designed to adaptively filter blocks based on identifying the direction.

- CDF

Cumulative distribution function representing the probability, multiplied by 32768, that a symbol has a value less than or equal to a given level.

- Chroma

A sample value matrix or a single sample value of one of the two color difference signals.

Note: The symbols representing chroma are U and V.

- Coded frame

The representation of one frame before the decoding process.

- Component

One of the three sample value matrices (one luma matrix and two chroma matrices) or its single sample value.

- Compound prediction

A type of inter prediction where sample values are computed by blending together predictions from two reference frames (the frames blended can be the same or different).

- DC coefficient

A transform coefficient whose frequency indices are zero in both dimensions.

- Decoded frame

The frame reconstructed out of the bitstream by the decoder.

- Decoder

One embodiment of the decoding process.

- Decoding process

The process that derives decoded frames from syntax elements, including any processing steps used prior to and for the film grain synthesis process.

- Dequantization

The process in which transform coefficients are obtained by scaling the quantized coefficients.

- Encoder

One embodiment of the encoding process.

- Encoding process

A process, not specified in this document, that generates a bitstream conforming to the description provided in this document.

- Enhancement layer

A layer with either spatial_id greater than 0 or temporal_id greater than 0.

- Flag

A binary variable. Some variables and syntax elements (e.g. obu_extension_flag) are described using the word flag to highlight that the syntax element can only be equal to 0 or equal to 1.

- Frame

The representation of video signals in the spatial domain, composed of one luma sample matrix (Y) and two chroma sample matrices (U and V).

- Frame context

A set of probabilities used in the decoding process.

- Golden frame

A frame that can be used in inter coding. Typically the golden frame is encoded with higher quality and is used as a reference for multiple inter frames.

- Inter coding

Coding one block or frame using inter prediction.

- Inter frame

A frame compressed by referencing previously decoded frames and which may use intra prediction or inter prediction.

- Inter prediction

The process of deriving the prediction value for the current frame using previously decoded frames.

- Intra coding

Coding one block or frame using intra prediction.

- Intra frame

A frame compressed using only intra prediction which can be independently decoded.

- Intra prediction

The process of deriving the prediction value for the current sample using previously decoded sample values in the same decoded frame.

- Inverse transform

The process in which a transform coefficient matrix is transformed into a spatial sample value matrix.

- Key frame

An intra frame which resets the decoding process when it is shown.

- Layer

A set of tile group OBUs with identical spatial_id and identical temporal_id values.

- Level

A defined set of constraints on the values for the syntax elements and variables.

- Loop filter

A filtering process applied to the reconstruction intended to reduce the visibility of block edges.

- Luma

A sample value matrix or a single sample value representing the monochrome signal related to the primary colors.

Note: The symbol representing luma is Y.

- Mode info

Syntax elements sent for a block containing an indication of how a block is to be predicted during the decoding process.

- Mode info block

A luma sample value block of size 4x4 or larger and its two corresponding chroma sample value blocks (if present).

- Motion vector

A two-dimensional vector used for inter prediction that relates the current frame to the reference frame. Its value provides the coordinate offsets from a location in the current frame to a location in the reference frame.

- OBU

All structures are packetized in “Open Bitstream Units” or OBUs. Each OBU has a header, which provides identifying information for the contained data (payload).

- Parse

The procedure of getting the syntax element from the bitstream.

- Prediction

The implementation of the prediction process consisting of either inter or intra prediction.

- Prediction process

The process of estimating the decoded sample value or data element using a predictor.

- Prediction value

The value, which is the combination of the previously decoded sample values or data elements, used in the decoding process of the next sample value or data element.

- Profile

A subset of syntax, semantics and algorithms defined in a part.

- Quantization parameter

A variable used for scaling the quantized coefficients in the decoding process.

- Quantized coefficient

A transform coefficient before dequantization.

- Raster scan

A mapping of a two-dimensional rectangular raster into a one-dimensional raster. The entries of the one-dimensional raster start with the first row of the two-dimensional raster, continue with the second row, then the third row, and so on. Each raster row is scanned in left-to-right order.

- Reconstruction

The process of adding the decoded residual to the corresponding prediction values.

- Reference

One of a set of tags, each of which is mapped to a reference frame.

- Reference frame

A storage area for a previously decoded frame and associated information.

- Reserved

A special syntax element value which may be used to extend this part in the future.

- Residual

The differences between the reconstructed samples and the corresponding prediction values.

- Sample

The basic elements that compose the frame.

- Sample value

The value of a sample. This is an integer from 0 to 255 (inclusive) for 8-bit frames, from 0 to 1023 (inclusive) for 10-bit frames, and from 0 to 4095 (inclusive) for 12-bit frames.

- Segmentation map

One 3-bit number per 4x4 block in the frame specifying the segment affiliation of that block. A segmentation map is stored for each reference frame to allow new frames to use a previously coded map.

- Sequence

The highest-level syntax structure of the coded bitstream, comprising one or more consecutive coded frames.

- Superblock

The top level of the block quadtree within a tile. All superblocks within a frame are the same size and are square. The superblocks may be 128x128 luma samples or 64x64 luma samples. A superblock may contain 1, 2, or 4 mode info blocks, or may be bisected in each direction to create 4 sub-blocks, which may themselves be further subpartitioned, forming the block quadtree.

- Switch frame

An inter frame that can be used as a point to switch between sequences. Switch frames overwrite all the reference frames without forcing the use of intra coding. The intention is to allow a streaming use case where videos can be encoded in small chunks (say, of 1 second duration), each starting with a switch frame. If the available bandwidth drops, the server can start sending chunks from a lower bitrate encoding instead. When this happens, inter prediction uses the existing higher quality reference frames to decode the switch frame. This approach allows a bitrate switch without the cost of a full key frame.

- Syntax element

An element of data represented in the bitstream.

- Temporal delimiter OBU

An indication that the following OBUs will have a different presentation/decoding time stamp from that of the last frame prior to the temporal delimiter.

- Temporal unit

The set of all OBUs that are associated with a specific, distinct time instant. A temporal unit consists of a temporal delimiter OBU and all the OBUs that follow, up to but not including the next temporal delimiter.

- Temporal group

A set of frames whose temporal prediction structure is used periodically in a video sequence.

- Tier

A specified category of level constraints imposed on the values of the syntax elements in the bitstream.

- Tile

A rectangular region of the frame that can be decoded and encoded independently, although loop-filtering across tile edges is still applied.

- Transform block

A rectangular transform coefficient matrix, used as input to the inverse transform process.

- Transform coefficient

A scalar value, considered to be in a frequency domain, contained in a transform block.

- Uncompressed header

High level description of the frame to be decoded that is encoded without the use of arithmetic encoding.
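
Several of the definitions above (sample value, byte alignment, raster scan, CDF, and temporal unit) describe small computations. The following Python sketch restates them as code for illustration only. All function names and the `"temporal_delimiter"` label are hypothetical and do not appear in this document, and the decision to reject an OBU that precedes the first temporal delimiter is an assumption of the sketch.

```python
def max_sample_value(bit_depth):
    """Largest sample value for 8-bit, 10-bit, or 12-bit frames."""
    if bit_depth not in (8, 10, 12):
        raise ValueError("bit depth must be 8, 10, or 12")
    return (1 << bit_depth) - 1


def is_byte_aligned(bit_position):
    """True if the position, counted from the first bit of the
    bitstream, is an integer multiple of eight."""
    return bit_position % 8 == 0


def raster_index(row, col, width):
    """Index of element (row, col) after a raster scan of a
    two-dimensional raster that is `width` entries wide."""
    return row * width + col


def cdf_from_probabilities(probabilities):
    """Cumulative probabilities scaled by 32768, one entry per symbol."""
    cdf = []
    total = 0.0
    for p in probabilities:
        total += p
        cdf.append(round(total * 32768))
    return cdf


def split_temporal_units(obu_types):
    """Group OBU type labels into temporal units.

    Each unit starts at a temporal delimiter and runs up to, but not
    including, the next one. Rejecting a leading non-delimiter OBU is
    an assumption of this sketch.
    """
    units = []
    for obu_type in obu_types:
        if obu_type == "temporal_delimiter":
            units.append([obu_type])
        elif units:
            units[-1].append(obu_type)
        else:
            raise ValueError("first OBU must be a temporal delimiter")
    return units
```

For example, `raster_index(1, 2, 4)` addresses the third entry of the second row of a raster that is four entries wide, and `max_sample_value(10)` gives the upper bound of the 10-bit sample range stated in the sample value definition.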

Symbols and abbreviated terms

- DCT

Discrete Cosine Transform

- ADST

Asymmetric Discrete Sine Transform

- LSB

Least Significant Bit

- MSB

Most Significant Bit

- WHT

Walsh-Hadamard Transform

The specification makes use of a number of constant integers. Constants that relate to the semantics of a particular syntax element are defined in section 6.

Additional constants are defined below:

| Symbol name | Value | Description |
|---|---|---|
| REFS_PER_FRAME | 7 | Number of reference frames that can be used for inter prediction |
| TOTAL_REFS_PER_FRAME | 8 | Number of reference frame types (including intra type) |
| BLOCK_SIZE_GROUPS | 4 | Number of contexts when decoding y_mode |
| BLOCK_SIZES | 22 | Number of different block sizes used |
| BLOCK_INVALID | 22 | Sentinel value to mark partition choices that are not allowed |
| MAX_SB_SIZE | 128 | Maximum size of a superblock in luma samples |
| MI_SIZE | 4 | Smallest size of a mode info block in luma samples |
| MI_SIZE_LOG2 | 2 | Base 2 logarithm of smallest size of a mode info block |
| MAX_TILE_WIDTH | 4096 | Maximum width of a tile in units of luma samples |
| MAX_TILE_AREA | 4096 * 2304 | Maximum area of a tile in units of luma samples |
| MAX_TILE_ROWS | 64 | Maximum number of tile rows |
| MAX_TILE_COLS | 64 | Maximum number of tile columns |
| INTRABC_DELAY_PIXELS | 256 | Number of horizontal luma samples before intra block copy can be used |
| INTRABC_DELAY_SB64 | 4 | Number of 64 by 64 blocks before intra block copy can be used |
| NUM_REF_FRAMES | 8 | Number of frames that can be stored for future reference |
| IS_INTER_CONTEXTS | 4 | Number of contexts for is_inter |
| REF_CONTEXTS | 3 | Number of contexts for single_ref, comp_ref, comp_bwdref, uni_comp_ref, uni_comp_ref_p1 and uni_comp_ref_p2 |
| MAX_SEGMENTS | 8 | Number of segments allowed in segmentation map |
| SEGMENT_ID_CONTEXTS | 3 | Number of contexts for segment_id |
| SEG_LVL_ALT_Q | 0 | Index for quantizer segment feature |
| SEG_LVL_ALT_LF_Y_V | 1 | Index for vertical luma loop filter segment feature |
| SEG_LVL_REF_FRAME | 5 | Index for reference frame segment feature |
| SEG_LVL_SKIP | 6 | Index for skip segment feature |
| SEG_LVL_GLOBALMV | 7 | Index for global mv feature |
| SEG_LVL_MAX | 8 | Number of segment features |
| PLANE_TYPES | 2 | Number of different plane types (luma or chroma) |
| TX_SIZE_CONTEXTS | 3 | Number of contexts for transform size |
| INTERP_FILTERS | 3 | Number of values for interp_filter |
| INTERP_FILTER_CONTEXTS | 16 | Number of contexts for interp_filter |
| SKIP_MODE_CONTEXTS | 3 | Number of contexts for decoding skip_mode |
| SKIP_CONTEXTS | 3 | Number of contexts for decoding skip |
| PARTITION_CONTEXTS | 4 | Number of contexts when decoding partition |
| TX_SIZES | 5 | Number of square transform sizes |
| TX_SIZES_ALL | 19 | Number of transform sizes (including non-square sizes) |
| TX_MODES | 3 | Number of values for tx_mode |
| DCT_DCT | 0 | Inverse transform rows with DCT and columns with DCT |
| ADST_DCT | 1 | Inverse transform rows with DCT and columns with ADST |
| DCT_ADST | 2 | Inverse transform rows with ADST and columns with DCT |
| ADST_ADST | 3 | Inverse transform rows with ADST and columns with ADST |
| FLIPADST_DCT | 4 | Inverse transform rows with DCT and columns with FLIPADST |
| DCT_FLIPADST | 5 | Inverse transform rows with FLIPADST and columns with DCT |
| FLIPADST_FLIPADST | 6 | Inverse transform rows with FLIPADST and columns with FLIPADST |
| ADST_FLIPADST | 7 | Inverse transform rows with FLIPADST and columns with ADST |
| FLIPADST_ADST | 8 | Inverse transform rows with ADST and columns with FLIPADST |
| IDTX | 9 | Inverse transform rows with identity and columns with identity |
| V_DCT | 10 | Inverse transform rows with identity and columns with DCT |
| H_DCT | 11 | Inverse transform rows with DCT and columns with identity |
| V_ADST | 12 | Inverse transform rows with identity and columns with ADST |
| H_ADST | 13 | Inverse transform rows with ADST and columns with identity |
| V_FLIPADST | 14 | Inverse transform rows with identity and columns with FLIPADST |
| H_FLIPADST | 15 | Inverse transform rows with FLIPADST and columns with identity |
| TX_TYPES | 16 | Number of inverse transform types |
| MB_MODE_COUNT | 17 | Number of values for YMode |
| INTRA_MODES | 13 | Number of values for y_mode |
| UV_INTRA_MODES_CFL_NOT_ALLOWED | 13 | Number of values for uv_mode when chroma from luma is not allowed |
| UV_INTRA_MODES_CFL_ALLOWED | 14 | Number of values for uv_mode when chroma from luma is allowed |
| COMPOUND_MODES | 8 | Number of values for compound_mode |
| COMPOUND_MODE_CONTEXTS | 8 | Number of contexts for compound_mode |
| COMP_NEWMV_CTXS | 5 | Number of new mv values used when constructing context for compound_mode |
| NEW_MV_CONTEXTS | 6 | Number of contexts for new_mv |
| ZERO_MV_CONTEXTS | 2 | Number of contexts for zero_mv |
| REF_MV_CONTEXTS | 6 | Number of contexts for ref_mv |
| DRL_MODE_CONTEXTS | 3 | Number of contexts for drl_mode |
| MV_CONTEXTS | 2 | Number of contexts for decoding motion vectors including one for intra block copy |
| MV_INTRABC_CONTEXT | 1 | Motion vector context used for intra block copy |
| MV_JOINTS | 4 | Number of values for mv_joint |
| MV_CLASSES | 11 | Number of values for mv_class |
| CLASS0_SIZE | 2 | Number of values for mv_class0_bit |
| MV_OFFSET_BITS | 10 | Maximum number of bits for decoding motion vectors |
| MAX_LOOP_FILTER | 63 | Maximum value used for loop filtering |
| REF_SCALE_SHIFT | 14 | Number of bits of precision when scaling reference frames |
| SUBPEL_BITS | 4 | Number of bits of precision when choosing an inter prediction filter kernel |
| SUBPEL_MASK | 15 | ( 1 << SUBPEL_BITS ) - 1 |
| SCALE_SUBPEL_BITS | 10 | Number of bits of precision when computing inter prediction locations |
| MV_BORDER | 128 | Value used when clipping motion vectors |
| PALETTE_COLOR_CONTEXTS | 5 | Number of values for color contexts |
| PALETTE_MAX_COLOR_CONTEXT_HASH | 8 | Number of mappings between color context hash and color context |
| PALETTE_BLOCK_SIZE_CONTEXTS | 7 | Number of values for palette block size |
| PALETTE_Y_MODE_CONTEXTS | 3 | Number of values for palette Y plane mode contexts |
| PALETTE_UV_MODE_CONTEXTS | 2 | Number of values for palette U and V plane mode contexts |
| PALETTE_SIZES | 7 | Number of values for palette_size |
| PALETTE_COLORS | 8 | Number of values for palette_color |
| PALETTE_NUM_NEIGHBORS | 3 | Number of neighbors considered within palette computation |
| DELTA_Q_SMALL | 3 | Value indicating alternative encoding of quantizer index delta values |
| DELTA_LF_SMALL | 3 | Value indicating alternative encoding of loop filter delta values |
| QM_TOTAL_SIZE | 3344 | Number of values in the quantizer matrix |
| MAX_ANGLE_DELTA | 3 | Maximum magnitude of AngleDeltaY and AngleDeltaUV |
| DIRECTIONAL_MODES | 8 | Number of directional intra modes |
| ANGLE_STEP | 3 | Number of degrees of step per unit increase in AngleDeltaY or AngleDeltaUV. |
| TX_SET_TYPES_INTRA | 3 | Number of intra transform set types |
| TX_SET_TYPES_INTER | 4 | Number of inter transform set types |
| WARPEDMODEL_PREC_BITS | 16 | Internal precision of warped motion models |
| IDENTITY | 0 | Warp model is just an identity transform |
| TRANSLATION | 1 | Warp model is a pure translation |
| ROTZOOM | 2 | Warp model is a rotation + symmetric zoom + translation |
| AFFINE | 3 | Warp model is a general affine transform |
| GM_ABS_TRANS_BITS | 12 | Number of bits encoded for translational components of global motion models, if part of a ROTZOOM or AFFINE model |
| GM_ABS_TRANS_ONLY_BITS | 9 | Number of bits encoded for translational components of global motion models, if part of a TRANSLATION model |
| GM_ABS_ALPHA_BITS | 12 | Number of bits encoded for non-translational components of global motion models |
| DIV_LUT_PREC_BITS | 14 | Number of fractional bits of entries in divisor lookup table |
| DIV_LUT_BITS | 8 | Number of fractional bits for lookup in divisor lookup table |
| DIV_LUT_NUM | 257 | Number of entries in divisor lookup table |
| MOTION_MODES | 3 | Number of values for motion modes |
| SIMPLE | 0 | Use translation or global motion compensation |
| OBMC | 1 | Use overlapped block motion compensation |
| LOCALWARP | 2 | Use local warp motion compensation |
| LEAST_SQUARES_SAMPLES_MAX | 8 | Largest number of samples used when computing a local warp |
| LS_MV_MAX | 256 | Largest motion vector difference to include in local warp computation |
| WARPEDMODEL_TRANS_CLAMP | 1<<23 | Clamping value used for translation components of warp |
| WARPEDMODEL_NONDIAGAFFINE_CLAMP | 1<<13 | Clamping value used for matrix components of warp |
| WARPEDPIXEL_PREC_SHIFTS | 1<<6 | Number of phases used in warped filtering |
| WARPEDDIFF_PREC_BITS | 10 | Number of extra bits of precision in warped filtering |
| GM_ALPHA_PREC_BITS | 15 | Number of fractional bits for sending non-translational warp model coefficients |
| GM_TRANS_PREC_BITS | 6 | Number of fractional bits for sending translational warp model coefficients |
| GM_TRANS_ONLY_PREC_BITS | 3 | Number of fractional bits used for pure translational warps |
| INTERINTRA_MODES | 4 | Number of inter intra modes |
| MASK_MASTER_SIZE | 64 | Size of MasterMask array |
| SEGMENT_ID_PREDICTED_CONTEXTS | 3 | Number of contexts for segment_id_predicted |
| IS_INTER_CONTEXTS | 4 | Number of contexts for is_inter |
| FWD_REFS | 4 | Number of syntax elements for forward reference frames |
| BWD_REFS | 3 | Number of syntax elements for backward reference frames |
| SINGLE_REFS | 7 | Number of syntax elements for single reference frames |
| UNIDIR_COMP_REFS | 4 | Number of syntax elements for unidirectional compound reference frames |
| COMPOUND_TYPES | 2 | Number of values for compound_type |
| CFL_JOINT_SIGNS | 8 | Number of values for cfl_alpha_signs |
| CFL_ALPHABET_SIZE | 16 | Number of values for cfl_alpha_u and cfl_alpha_v |
| COMP_INTER_CONTEXTS | 5 | Number of contexts for comp_mode |
| COMP_REF_TYPE_CONTEXTS | 5 | Number of contexts for comp_ref_type |
| CFL_ALPHA_CONTEXTS | 6 | Number of contexts for cfl_alpha_u and cfl_alpha_v |
| INTRA_MODE_CONTEXTS | 5 | Number of each of left and above contexts for intra_frame_y_mode |
| COMP_GROUP_IDX_CONTEXTS | 6 | Number of contexts for comp_group_idx |
| COMPOUND_IDX_CONTEXTS | 6 | Number of contexts for compound_idx |
| INTRA_EDGE_KERNELS | 3 | Number of filter kernels for the intra edge filter |
| INTRA_EDGE_TAPS | 5 | Number of kernel taps for the intra edge filter |
| FRAME_LF_COUNT | 4 | Number of loop filter strength values |
| MAX_VARTX_DEPTH | 2 | Maximum depth for variable transform trees |
| TXFM_PARTITION_CONTEXTS | 21 | Number of contexts for txfm_split |
| REF_CAT_LEVEL | 640 | Bonus weight for close motion vectors |
| MAX_REF_MV_STACK_SIZE | 8 | Maximum number of motion vectors in the stack |
| MFMV_STACK_SIZE | 3 | Stack size for motion field motion vectors |
| MAX_TX_DEPTH | 2 | Maximum times the transform can be split |
| WEDGE_TYPES | 16 | Number of directions for the wedge mask process |
| FILTER_BITS | 7 | Number of bits used in Wiener filter coefficients |
| WIENER_COEFFS | 3 | Number of Wiener filter coefficients to read |
| SGRPROJ_PARAMS_BITS | 4 | Number of bits needed to specify self guided filter set |
| SGRPROJ_PRJ_SUBEXP_K | 4 | Controls how self guided deltas are read |
| SGRPROJ_PRJ_BITS | 7 | Precision bits during self guided restoration |
| SGRPROJ_RST_BITS | 4 | Restoration precision bits generated higher than source before projection |
| SGRPROJ_MTABLE_BITS | 20 | Precision of mtable division table |
| SGRPROJ_RECIP_BITS | 12 | Precision of division by n table |
| SGRPROJ_SGR_BITS | 8 | Internal precision bits for core selfguided_restoration |
| EC_PROB_SHIFT | 6 | Number of bits to reduce CDF precision during arithmetic coding |
| EC_MIN_PROB | 4 | Minimum probability assigned to each symbol during arithmetic coding |
| SELECT_SCREEN_CONTENT_TOOLS | 2 | Value that indicates the allow_screen_content_tools syntax element is coded |
| SELECT_INTEGER_MV | 2 | Value that indicates the force_integer_mv syntax element is coded |
| RESTORATION_TILESIZE_MAX | 256 | Maximum size of a loop restoration tile |
| MAX_FRAME_DISTANCE | 31 | Maximum distance when computing weighted prediction |
| MAX_OFFSET_WIDTH | 8 | Maximum horizontal offset of a projected motion vector |
| MAX_OFFSET_HEIGHT | 0 | Maximum vertical offset of a projected motion vector |
| WARP_PARAM_REDUCE_BITS | 6 | Rounding bitwidth for the parameters to the shear process |
| NUM_BASE_LEVELS | 2 | Number of quantizer base levels |
| COEFF_BASE_RANGE | 12 | The quantizer range above NUM_BASE_LEVELS above which the Exp-Golomb coding process is activated |
| BR_CDF_SIZE | 4 | Number of values for coeff_br |
| SIG_COEF_CONTEXTS_EOB | 4 | Number of contexts for coeff_base_eob |
| SIG_COEF_CONTEXTS_2D | 26 | Context offset for coeff_base for horizontal-only or vertical-only transforms. |
| SIG_COEF_CONTEXTS | 42 | Number of contexts for coeff_base |
| SIG_REF_DIFF_OFFSET_NUM | 5 | Maximum number of context samples to be used in determining the context index for coeff_base and coeff_base_eob. |
| SUPERRES_NUM | 8 | Numerator for upscaling ratio |
| SUPERRES_DENOM_MIN | 9 | Smallest denominator for upscaling ratio |
| SUPERRES_DENOM_BITS | 3 | Number of bits sent to specify denominator of upscaling ratio |
| SUPERRES_FILTER_BITS | 6 | Number of bits of fractional precision for upscaling filter selection |
| SUPERRES_FILTER_SHIFTS | 1 << SUPERRES_FILTER_BITS | Number of phases of upscaling filters |
| SUPERRES_FILTER_TAPS | 8 | Number of taps of upscaling filters |
| SUPERRES_FILTER_OFFSET | 3 | Sample offset for upscaling filters |
| SUPERRES_SCALE_BITS | 14 | Number of fractional bits for computing position in upscaling |
| SUPERRES_SCALE_MASK | (1 << 14) - 1 | Mask for computing position in upscaling |
| SUPERRES_EXTRA_BITS | 8 | Difference in precision between SUPERRES_SCALE_BITS and SUPERRES_FILTER_BITS |

TXB_SKIP_CONTEXTS |

13 | Number of contexts for all_zero |

EOB_COEF_CONTEXTS |

9 | Number of contexts for eob_extra |

DC_SIGN_CONTEXTS |

3 | Number of contexts for dc_sign |

LEVEL_CONTEXTS |

21 | Number of contexts for coeff_br |

TX_CLASS_2D |

0 | Transform class for transform types performing non-identity transforms in both directions |

TX_CLASS_HORIZ |

1 | Transform class for transforms performing only a horizontal non-identity transform |

TX_CLASS_VERT |

2 | Transform class for transforms performing only a vertical non-identity transform |

REFMVS_LIMIT |

( 1 << 12 ) - 1 | Largest reference MV component that can be saved |

INTRA_FILTER_SCALE_BITS |

4 | Scaling shift for intra filtering process |

INTRA_FILTER_MODES |

5 | Number of types of intra filtering |

COEFF_CDF_Q_CTXS |

4 | Number of selectable context types for the coeff( ) syntax structure |

PRIMARY_REF_NONE |

7 | Value of primary_ref_frame indicating that there is no primary reference frame |

BUFFER_POOL_MAX_SIZE |

10 | Number of frames in buffer pool |

Conventions

General

The mathematical operators and their precedence rules used to describe this Specification are similar to those used in the C programming language. However, the operation of integer division with truncation is specifically defined.

In addition, a length 2 array used to hold a motion vector (indicated by the variable name ending with the letters Mv or Mvs) can be accessed using either array notation (e.g. Mv[ 0 ] and Mv[ 1 ]), or by just the name (e.g., Mv). The only operations defined when using the name are assignment and equality/inequality testing. Assignment of an array is represented using the notation A = B and is specified to mean the same as doing both the individual assignments A[ 0 ] = B[ 0 ] and A[ 1 ] = B[ 1 ]. Equality testing of 2 motion vectors is represented using the notation A == B and is specified to mean the same as (A[ 0 ] == B[ 0 ] && A[ 1 ] == B[ 1 ]). Inequality testing is defined as A != B and is specified to mean the same as (A[ 0 ] != B[ 0 ] || A[ 1 ] != B[ 1 ]).

When a variable is said to be representable by a signed integer with x bits, it means that the variable is greater than or equal to -(1 << (x-1)), and that the variable is less than or equal to (1 << (x-1))-1.
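The range check above can be written directly as a small predicate. This is only an illustrative sketch; the function name `fits_signed` is hypothetical and does not appear in this Specification.

```python
def fits_signed(value: int, bits: int) -> bool:
    """Return True if value is representable by a signed integer with `bits` bits.

    Mirrors the definition in the text:
    -(1 << (bits-1)) <= value <= (1 << (bits-1)) - 1.
    """
    lowest = -(1 << (bits - 1))
    highest = (1 << (bits - 1)) - 1
    return lowest <= value <= highest
```

For example, an 8-bit signed integer covers -128 to 127 inclusive, so `fits_signed(128, 8)` is false.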

The key words “must”, “must not”, “required”, “shall”, “shall not”, “should”, “should not”, “recommended”, “may”, and “optional” in this document are to be interpreted as described in RFC 2119.

Arithmetic operators

| + | Addition |

| - | Subtraction (as a binary operator) or negation (as a unary prefix operator) |

| * | Multiplication |

| / | Integer division with truncation of the result toward zero. For example, 7/4 and -7/-4 are truncated to 1 and -7/4 and 7/-4 are truncated to -1. |

| a % b | Remainder from division of a by b. Both a and b are positive integers. |

| ÷ | Floating point (arithmetical) division. |

| ceil(x) | The smallest integer that is greater than or equal to x. |

| floor(x) | The largest integer that is less than or equal to x. |
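Truncation toward zero is worth spelling out because not every language divides integers this way. As a hedged illustration, Python's `//` operator rounds toward negative infinity, so a helper is needed to reproduce the `/` operator defined above. The name `div_trunc` is hypothetical.

```python
def div_trunc(a: int, b: int) -> int:
    """Integer division with truncation of the result toward zero.

    Divides the magnitudes, then restores the sign, so that
    -7/4 gives -1 rather than the -2 produced by Python's floor division.
    """
    quotient = abs(a) // abs(b)
    return quotient if (a < 0) == (b < 0) else -quotient
```

This reproduces the four examples in the table: 7/4 and -7/-4 give 1, while -7/4 and 7/-4 give -1.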

Logical operators

| a && b | Logical AND operation between a and b |

| a || b | Logical OR operation between a and b |

| ! | Logical NOT operation. |

Relational operators

| > | Greater than |

| >= | Greater than or equal to |

| < | Less than |

| <= | Less than or equal to |

| == | Equal to |

| != | Not equal to |

Bitwise operators

| & | AND operation |

| | | OR operation |

| ^ | XOR operation |

| ~ | Negation operation |

| a >> b | Shift a in 2’s complement binary integer representation format to the right by b bit positions. This operator is only used with b being a non-negative integer. Bits shifted into the MSBs as a result of the right shift have a value equal to the MSB of a prior to the shift operation. |

| a << b | Shift a in 2’s complement binary integer representation format to the left by b bit positions. This operator is only used with b being a non-negative integer. Bits shifted into the LSBs as a result of the left shift have a value equal to 0. |

Assignment

| = | Assignment operator |

| ++ | Increment, x++ is equivalent to x = x + 1. When this operator is used for an array index, the variable value is obtained before the auto increment operation |

| -- | Decrement, i.e. x-- is equivalent to x = x - 1. When this operator is used for an array index, the variable value is obtained before the auto decrement operation |

| += | Addition assignment operator, for example x += 3 corresponds to x = x + 3 |

| -= | Subtraction assignment operator, for example x -= 3 corresponds to x = x - 3 |

Mathematical functions

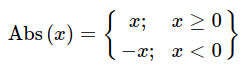

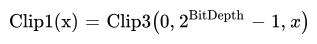

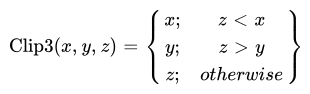

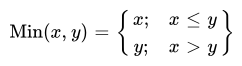

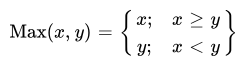

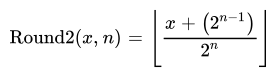

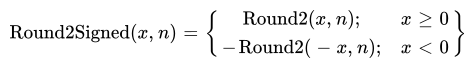

The following mathematical functions (Abs, Clip3, Clip1, Min, Max, Round2 and Round2Signed) are defined as follows:

The definition of Round2 uses standard mathematical power and division operations, not integer operations. An equivalent definition using integer operations is:

Round2( x, n ) {

if ( n == 0 )

return x

return ( x + ( 1 << (n - 1) ) ) >> n

}
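The integer form of Round2 can be sketched in Python as below. The sketch also includes Round2Signed and Clip3, assuming the conventions of the published AV1 specification: Round2Signed rounds the magnitude and restores the sign, and Clip3( x, y, z ) clamps z to the range x to y. Those two definitions are assumptions here, since they are given only as figures in this document.

```python
def round2(x: int, n: int) -> int:
    """Integer form of Round2: add half of 2**n, then shift right by n."""
    if n == 0:
        return x
    return (x + (1 << (n - 1))) >> n


def round2_signed(x: int, n: int) -> int:
    """Assumed definition: apply Round2 to the magnitude and keep the sign."""
    return round2(x, n) if x >= 0 else -round2(-x, n)


def clip3(low: int, high: int, value: int) -> int:
    """Assumed definition: clamp value to the inclusive range low..high."""
    if value < low:
        return low
    if value > high:
        return high
    return value
```

Note that `round2(-5, 1)` evaluates to -2 while `round2_signed(-5, 1)` evaluates to -3, which is why the two functions are kept distinct.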

The FloorLog2(x) function is defined to be the floor of the base 2 logarithm of the input x.

The input x will always be an integer, and will always be greater than or equal to 1.

This function extracts the location of the most significant bit in x.

An equivalent definition (using the pseudo-code notation introduced in the following section) is:

FloorLog2( x ) {

s = 0

while ( x != 0 ) {

x = x >> 1

s++

}

return s - 1

}
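A direct Python transcription of the pseudo-code above is shown below as a sketch. For inputs of 1 or more it gives the same result as Python's built-in `int.bit_length()` minus 1, which may be a convenient shortcut in an implementation.

```python
def floor_log2(x: int) -> int:
    """Floor of the base 2 logarithm of x, for integer x >= 1.

    Counts how many right shifts are needed to reach zero, then subtracts 1,
    which yields the index of the most significant set bit.
    """
    s = 0
    while x != 0:
        x = x >> 1
        s += 1
    return s - 1
```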

The CeilLog2(x) function is defined to be the ceiling of the base 2 logarithm of the input x (when x is 0, it is defined to return 0).

The input x will always be an integer, and will always be greater than or equal to 0.

This function extracts the number of bits needed to code a value in the range 0 to x-1.

An equivalent definition (using the pseudo-code notation introduced in the following section) is:

CeilLog2( x ) {

if ( x < 2 )

return 0

i = 1

p = 2

while ( p < x ) {

i++

p = p << 1

}

return i

}
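The same pseudo-code can be transcribed to Python as a sketch. The results illustrate the stated purpose: for example, coding a value in the range 0 to 4 (x equal to 5) needs 3 bits.

```python
def ceil_log2(x: int) -> int:
    """Ceiling of the base 2 logarithm of x, returning 0 when x < 2.

    Doubles p until it reaches or exceeds x, counting the doublings.
    """
    if x < 2:
        return 0
    i = 1
    p = 2
    while p < x:
        i += 1
        p = p << 1
    return i
```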

Method of describing bitstream syntax

The description style of the syntax is similar to the C programming language. Syntax elements in the bitstream are represented in bold type. Each syntax element is described by its name (using only lower case letters with underscore characters) and a descriptor for its method of coded representation. The decoding process behaves according to the value of the syntax element and to the values of previously decoded syntax elements. When a value of a syntax element is used in the syntax tables or the text, it appears in regular (i.e. not bold) type. If the value of a syntax element is being computed (e.g. being written with a default value instead of being coded in the bitstream), it also appears in regular type (e.g. tile_size_minus_1).

In some cases the syntax tables may use the values of other variables derived from syntax element values. Such variables appear in the syntax tables, or text, named by a mixture of lower case and upper case letters and without any underscore characters. Variables starting with an upper case letter are derived for the decoding of the current syntax structure and all depending syntax structures. These variables may be used in the decoding process for later syntax structures. Variables starting with a lower case letter are only used within the process from which they are derived. (Single character variables are allowed.)

Constant values appear in all upper case letters with underscore characters (e.g. MI_SIZE).

Constant lookup tables appear as words (with the first letter of each word in upper case, and remaining letters in lower case) separated with underscore characters (e.g. Block_Width[…]).

Hexadecimal notation, indicated by prefixing the hexadecimal number by 0x, may be used when the number of bits is an integer multiple of 4. For example, 0x1a represents a bit string 0001 1010.

Binary notation is indicated by prefixing the binary number by 0b. For example, 0b00011010 represents a bit string 0001 1010. Binary numbers may include underscore characters to enhance readability. If present, the underscore characters appear every 4 binary digits starting from the LSB. For example, 0b11010 may also be written as 0b1_1010.

A value equal to 0 represents a FALSE condition in a test statement. The value TRUE is represented by any value not equal to 0.

The following table lists examples of the syntax specification format. When syntax_element appears (with bold face font), it specifies that this syntax element is parsed from the bitstream.

| Type | |

| /* A statement can be a syntax element with associated | |

| descriptor or can be an expression used to specify its | |

| existence, type, and value, as in the following | |

| examples */ | |

| syntax_element | f(1) |

| /* A group of statements enclosed in brackets is a | |

| compound statement and is treated functionally as a single | |

| statement. */ | |

| { | |

| statement | |

| … | |

| } | |

| /* A “while” structure specifies that the statement is | |

| to be evaluated repeatedly while the condition remains | |

| true. */ | |

| while ( condition ) | |

| statement | |

| /* A “do .. while” structure executes the statement once, | |

| and then tests the condition. It repeatedly evaluates the | |

| statement while the condition remains true. */ | |

| do | |

| statement | |

| while ( condition ) | |

| /* An “if .. else” structure tests the condition first. If | |

| it is true, the primary statement is evaluated. Otherwise, | |

| the alternative statement is evaluated. If no alternative | |

| statement is needed, the “else” and the | |

| corresponding alternative statement can be omitted. */ | |

| if ( condition ) | |

| primary statement | |

| else | |

| alternative statement | |

| /* A “for” structure evaluates the initial statement at the | |

| beginning then tests the condition. If it is true, the primary | |

| and subsequent statements are evaluated until the condition | |

| becomes false. */ | |

| for ( initial statement; condition; subsequent statement ) | |

| primary statement | |

| /* The return statement in a syntax structure specifies | |

| that the parsing of the syntax structure will be terminated | |

| without processing any additional information after this stage. | |

| When a value immediately follows a return statement, this value | |

| shall also be returned as the output of this syntax structure. */ | |

| return x |

Functions

Bitstream functions used for syntax description are specified in this section.

Other functions are included in the syntax tables. The convention is that a section is called syntax if it causes syntax elements to be read from the bitstream, either directly or indirectly through subprocesses. The remaining sections are called functions.

The specification of these functions makes use of a bitstream position indicator. This bitstream position indicator locates the position of the bit that is going to be read next.

get_position( ): Return the value of the bitstream position indicator.

init_symbol( sz ): Initialize the arithmetic decode process for the Symbol decoder with a size of sz bytes as specified in section 8.2.2.

exit_symbol( ): Exit the arithmetic decode process as described in section 8.2.4 (this includes reading trailing bits).

Descriptors

General

The following descriptors specify the parsing of syntax elements. Lower case descriptors specify syntax elements that are represented by an integer number of bits in the bitstream; upper case descriptors specify syntax elements that are represented by arithmetic coding.

f(n)

Unsigned n-bit number appearing directly in the bitstream. The bits are read from high to low order. The parsing process specified in section 8.1 is invoked and the syntax element is set equal to the return value.
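A minimal sketch of an f(n) reader follows. It assumes that bits within each byte are consumed from the most significant bit first, which is how the parsing process in section 8.1 is understood here. The class name `BitReader` and its attributes are hypothetical; `pos` plays the role of the bitstream position indicator.

```python
class BitReader:
    """Reads unsigned fixed-width fields from a byte buffer, high bit first."""

    def __init__(self, data: bytes):
        self.data = data
        self.pos = 0  # bitstream position indicator, counted in bits

    def f(self, n: int) -> int:
        """Read an unsigned n-bit number; the first bit read is the highest."""
        x = 0
        for _ in range(n):
            byte = self.data[self.pos >> 3]
            bit = (byte >> (7 - (self.pos & 7))) & 1  # assumed MSB-first order
            x = (x << 1) | bit
            self.pos += 1
        return x
```

Reading f(1) and then f(3) from the single byte 0b10110000 yields 1 and 0b011, and leaves the position indicator at 4.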

uvlc()

Variable length unsigned number appearing directly in the bitstream. The parsing process for this descriptor is specified below:

| uvlc() { | Type |

| leadingZeros = 0 | |

| while ( 1 ) { | |

| done | f(1) |

| if ( done ) | |

| break | |

| leadingZeros++ | |

| } | |

| if ( leadingZeros >= 32 ) { | |

| return ( 1 << 32 ) - 1 | |

| } | |

| value | f(leadingZeros) |

| return value + ( 1 << leadingZeros ) - 1 | |

| } |
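The uvlc() table above can be exercised with a small sketch. The `BitReader` helper is hypothetical and assumes bits are read most significant bit first; only the `uvlc` function mirrors the table.

```python
class BitReader:
    """Hypothetical helper: reads bits from a byte buffer, high bit first."""

    def __init__(self, data: bytes):
        self.data = data
        self.pos = 0

    def f(self, n: int) -> int:
        x = 0
        for _ in range(n):
            bit = (self.data[self.pos >> 3] >> (7 - (self.pos & 7))) & 1
            x = (x << 1) | bit
            self.pos += 1
        return x


def uvlc(r: BitReader) -> int:
    """Count leading zero bits, then read that many value bits."""
    leading_zeros = 0
    while True:
        done = r.f(1)
        if done:
            break
        leading_zeros += 1
    if leading_zeros >= 32:
        return (1 << 32) - 1
    value = r.f(leading_zeros)
    return value + (1 << leading_zeros) - 1


# The bit strings 1, 010, 011 and 00100 packed back to back into two bytes.
reader = BitReader(bytes([0xA6, 0x40]))
decoded = [uvlc(reader) for _ in range(4)]
```

With this input, `decoded` holds the values 0, 1, 2 and 3, showing that shorter codes are assigned to smaller values.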

le(n)

Unsigned little-endian n-byte number appearing directly in the bitstream. The parsing process for this descriptor is specified below:

| le(n) { | Type |

| t = 0 | |

| for ( i = 0; i < n; i++) { | |

| byte | f(8) |

| t += ( byte << ( i * 8 ) ) | |

| } | |

| return t | |

| } |

Note: This syntax element will only be present when the bitstream position is byte aligned.
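Because le(n) is only present at byte-aligned positions, it can be sketched as operating directly on bytes. The function below is an illustrative transcription that takes the bytes already extracted from the bitstream; the name `le` applied to a byte buffer is an assumption made for this example.

```python
def le(data: bytes, n: int) -> int:
    """Combine the first n bytes of data as a little-endian unsigned number."""
    t = 0
    for i in range(n):
        byte = data[i]
        t += byte << (i * 8)  # byte i contributes bits 8*i .. 8*i+7
    return t
```

The result matches Python's `int.from_bytes(data[:n], "little")`.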

leb128()

Unsigned integer represented by a variable number of little-endian bytes.

Note: This syntax element will only be present when the bitstream position is byte aligned.

In this encoding, the most significant bit of each byte is equal to 1 to signal that more bytes should be read, or equal to 0 to signal the end of the encoding.

A variable Leb128Bytes is set equal to the number of bytes read during this process.

The parsing process for this descriptor is specified below:

| leb128() { | Type |

| value = 0 | |

| Leb128Bytes = 0 | |

| for ( i = 0; i < 8; i++ ) { | |

| leb128_byte | f(8) |

| value |= ( (leb128_byte & 0x7f) << (i*7) ) | |

| Leb128Bytes += 1 | |

| if ( !(leb128_byte & 0x80) ) { | |

| break | |

| } | |

| } | |

| return value | |

| } |

It is a requirement of bitstream conformance that the value returned from the leb128 parsing process is less than or equal to (1 << 32) - 1.

leb128_byte contains 8 bits read from the bitstream. The bottom 7 bits are used to compute the variable value. The most significant bit is used to indicate that there are more bytes to be read.

It is a requirement of bitstream conformance that the most significant bit of leb128_byte is equal to 0 if i is equal to 7. (This ensures that this syntax descriptor never uses more than 8 bytes.)

Note: There are multiple ways of encoding the same value depending on how many leading zero bits are encoded. There is no requirement that this syntax descriptor uses the most compressed representation. This can be useful for encoder implementations by allowing a fixed amount of space to be filled in later when the value becomes known.
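The leb128() process and its two conformance requirements can be sketched on a byte buffer as follows. Raising `ValueError` for non-conforming input is a choice made for this example only; this Specification does not define decoder behavior for such input. The function name and the `offset` parameter are hypothetical.

```python
def leb128(data: bytes, offset: int = 0):
    """Decode a leb128 value; return (value, number of bytes read)."""
    value = 0
    for i in range(8):
        leb128_byte = data[offset + i]
        value |= (leb128_byte & 0x7F) << (i * 7)  # bottom 7 bits carry data
        if not (leb128_byte & 0x80):              # MSB clear: last byte
            if value > (1 << 32) - 1:
                raise ValueError("leb128 value exceeds (1 << 32) - 1")
            return value, i + 1
    # Reaching here means the eighth byte still had its MSB set.
    raise ValueError("leb128 uses more than 8 bytes")
```

The bytes 0x85 0x80 0x00 decode to the value 5 using 3 bytes, the same value that the single byte 0x05 encodes. This is the non-minimal form that the note above describes as useful for reserving a fixed amount of space.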

su(n)

Signed integer converted from an n-bit unsigned integer in the bitstream. (The unsigned integer corresponds to the bottom n bits of the signed integer.) The parsing process for this descriptor is specified below:

| su(n) { | Type |

| value | f(n) |

| signMask = 1 << (n - 1) | |

| if ( value & signMask ) | |

| value = value - 2 * signMask | |

| return value | |

| } |
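The sign conversion in su(n) is a two's complement reinterpretation. The sketch below applies it to a value that has already been read with f(n); passing the raw value as a parameter is an assumption made to keep the example self-contained.

```python
def su(raw: int, n: int) -> int:
    """Reinterpret an n-bit unsigned value as a two's complement signed value."""
    sign_mask = 1 << (n - 1)
    if raw & sign_mask:
        raw = raw - 2 * sign_mask  # top bit set: subtract 2**n
    return raw
```

For n equal to 4, the raw values 0b0111, 0b1000 and 0b1111 map to 7, -8 and -1 respectively.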

ns(n)

Unsigned encoded integer with maximum number of values n (i.e. output in range 0..n-1).

This descriptor is similar to f(CeilLog2(n)), but reduces the wastage incurred when encoding value ranges whose size is not a power of two by encoding one fewer bit for the lower part of the value range. For example, when n is equal to 5, the encodings are as follows (full binary encodings are also presented for comparison):

| Value | Full binary encoding | ns(n) encoding |

|---|---|---|

| 0 | 000 | 00 |

| 1 | 001 | 01 |

| 2 | 010 | 10 |

| 3 | 011 | 110 |

| 4 | 100 | 111 |

The parsing process for this descriptor is specified as:

| ns( n ) { | Type |

| w = FloorLog2(n) + 1 | |

| m = (1 << w) - n | |

| v | f(w - 1) |

| if ( v < m ) | |

| return v | |

| extra_bit | f(1) |

| return (v << 1) - m + extra_bit | |

| } |

The abbreviation ns stands for non-symmetric. This encoding is non-symmetric because the values are not all coded with the same number of bits.
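The ns(n) table and the example encodings for n equal to 5 can be checked with a short sketch. The helper `decode_ns_string`, which feeds a string of 0 and 1 characters to the parser, is hypothetical and exists only to make the example easy to read.

```python
def ns(bits, n: int) -> int:
    """Decode an ns(n) value from an iterator that yields bits (0 or 1)."""

    def f(count: int) -> int:
        v = 0
        for _ in range(count):
            v = (v << 1) | next(bits)
        return v

    w = n.bit_length()        # equals FloorLog2(n) + 1 for n >= 1
    m = (1 << w) - n          # number of values that use the short code
    v = f(w - 1)
    if v < m:
        return v
    extra_bit = f(1)
    return (v << 1) - m + extra_bit


def decode_ns_string(bit_string: str, n: int) -> int:
    """Hypothetical convenience wrapper: decode from a string such as '110'."""
    return ns(iter(int(c) for c in bit_string), n)
```

Decoding the strings 00, 01, 10, 110 and 111 with n equal to 5 yields the values 0 to 4, matching the table above.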

L(n)

Unsigned arithmetic encoded n-bit number encoded as n flags (a “literal”). The flags are read from high to low order. The syntax element is set equal to the return value of read_literal( n ) (see section 8.2.5 for a specification of this process).

S()

An arithmetic encoded symbol coded from a small alphabet of at most 16 entries.

The symbol is decoded based on a context sensitive CDF (see section 8.3 for the specification of this process).

NS(n)

Unsigned arithmetic encoded integer with maximum number of values n (i.e. output in range 0..n-1).

This descriptor is the same as ns(n) except the underlying bits are coded arithmetically.

The parsing process for this descriptor is specified as:

| NS( n ) { | Type |

| w = FloorLog2(n) + 1 | |

| m = (1 << w) - n | |

| v | L(w - 1) |

| if ( v < m ) | |

| return v | |

| extra_bit | L(1) |

| return (v << 1) - m + extra_bit | |

| } |

Syntax structures

General

This section presents the syntax structures in a tabular form. The meaning of each of the syntax elements is presented in Section 6.

Low overhead bitstream format

This specification defines a low-overhead bitstream format as a sequence of the OBU syntactical elements defined in this section. When using this format, obu_has_size_field must be equal to 1. For applications requiring a format where it is easier to skip through frames or temporal units, a length-delimited bitstream format is defined in Annex B.

Derived specifications, such as container formats enabling storage of AV1 videos together with audio or subtitles, should indicate which of these formats they rely on. Other methods of packing OBUs into a bitstream format are also allowed.

OBU syntax

General OBU syntax

| open_bitstream_unit( sz ) { | Type |

| obu_header() | |

| if ( obu_has_size_field ) { | |

| obu_size | leb128() |

| } else { | |

| obu_size = sz - 1 - obu_extension_flag | |

| } | |

| startPosition = get_position( ) | |

| if ( obu_type != OBU_SEQUENCE_HEADER && | |

| obu_type != OBU_TEMPORAL_DELIMITER && | |

| OperatingPointIdc != 0 && | |

| obu_extension_flag == 1 ) | |

| { | |

| inTemporalLayer = (OperatingPointIdc >> temporal_id ) & 1 | |

| inSpatialLayer = (OperatingPointIdc >> ( spatial_id + 8 ) ) & 1 | |

| if ( !inTemporalLayer || ! inSpatialLayer ) { | |

| drop_obu( ) | |

| return | |

| } | |

| } | |

| if ( obu_type == OBU_SEQUENCE_HEADER ) | |

| sequence_header_obu( ) | |

| else if ( obu_type == OBU_TEMPORAL_DELIMITER ) | |

| temporal_delimiter_obu( ) | |

| else if ( obu_type == OBU_FRAME_HEADER ) | |

| frame_header_obu( ) | |

| else if ( obu_type == OBU_REDUNDANT_FRAME_HEADER ) | |

| frame_header_obu( ) | |

| else if ( obu_type == OBU_TILE_GROUP ) | |

| tile_group_obu( obu_size ) | |

| else if ( obu_type == OBU_METADATA ) | |

| metadata_obu( ) | |

| else if ( obu_type == OBU_FRAME ) | |

| frame_obu( obu_size ) | |

| else if ( obu_type == OBU_TILE_LIST ) | |

| tile_list_obu( ) | |

| else if ( obu_type == OBU_PADDING ) | |

| padding_obu( ) | |

| else | |

| reserved_obu( ) | |

| currentPosition = get_position( ) | |

| payloadBits = currentPosition - startPosition | |

| if ( obu_size > 0 && obu_type != OBU_TILE_GROUP && | |

| obu_type != OBU_TILE_LIST && | |

| obu_type != OBU_FRAME ) { | |

| trailing_bits( obu_size * 8 - payloadBits ) | |

| } | |

| } |
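The layer test that decides whether drop_obu( ) is invoked can be sketched in isolation. The table shifts OperatingPointIdc by temporal_id for the temporal test and by spatial_id + 8 for the spatial test. The function name `obu_in_operating_point` is hypothetical, and the sketch covers only OBUs that carry an extension header and are neither sequence headers nor temporal delimiters.

```python
def obu_in_operating_point(operating_point_idc: int,
                           temporal_id: int,
                           spatial_id: int) -> bool:
    """Return True if the OBU is kept, False if it would be dropped."""
    if operating_point_idc == 0:
        return True  # the table skips the layer test in this case
    in_temporal_layer = (operating_point_idc >> temporal_id) & 1
    in_spatial_layer = (operating_point_idc >> (spatial_id + 8)) & 1
    return bool(in_temporal_layer and in_spatial_layer)
```

With OperatingPointIdc equal to 0x103 (bits 0, 1 and 8 set), an OBU with temporal_id 1 and spatial_id 0 is kept, while one with temporal_id 2 or with spatial_id 1 is dropped.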

OBU header syntax

| obu_header() { | Type |

| obu_forbidden_bit | f(1) |

| obu_type | f(4) |

| obu_extension_flag | f(1) |

| obu_has_size_field | f(1) |

| obu_reserved_1bit | f(1) |

| if ( obu_extension_flag == 1 ) | |

| obu_extension_header() | |

| } |

OBU extension header syntax

| obu_extension_header() { | Type |

| temporal_id | f(3) |

| spatial_id | f(2) |

| extension_header_reserved_3bits | f(3) |

| } |
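Since both header structures consist of fixed-width fields that total exactly one byte each, they can be unpacked with shifts and masks. The sketch below follows the field order of the two tables above and assumes the first field occupies the most significant bits; the function name and the dictionary layout are hypothetical.

```python
def parse_obu_header(data: bytes) -> dict:
    """Unpack obu_header() and, if present, obu_extension_header()."""
    b0 = data[0]
    header = {
        "obu_forbidden_bit": (b0 >> 7) & 1,
        "obu_type": (b0 >> 3) & 0xF,
        "obu_extension_flag": (b0 >> 2) & 1,
        "obu_has_size_field": (b0 >> 1) & 1,
        "obu_reserved_1bit": b0 & 1,
    }
    if header["obu_extension_flag"] == 1:
        b1 = data[1]
        header["temporal_id"] = (b1 >> 5) & 0x7
        header["spatial_id"] = (b1 >> 3) & 0x3
        header["extension_header_reserved_3bits"] = b1 & 0x7
    return header
```

For instance, the byte 0x12 unpacks to obu_type 2 with obu_has_size_field equal to 1 and no extension header.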

Trailing bits syntax

| trailing_bits( nbBits ) { | Type |

| trailing_one_bit | f(1) |

| nbBits-- | |

| while ( nbBits > 0 ) { | |

| trailing_zero_bit | f(1) |

| nbBits-- | |

| } | |

| } |

Byte alignment syntax

| byte_alignment( ) { | Type |

| while ( get_position( ) & 7 ) | |

| zero_bit | f(1) |

| } |
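The number of zero_bit elements that byte_alignment( ) reads depends only on the current position. A sketch of that count follows; the function name is hypothetical.

```python
def byte_alignment_bits(position: int) -> int:
    """Number of bits byte_alignment() reads starting from `position`.

    Equivalent to looping while (position & 7) is non-zero and
    advancing the position by one bit each time.
    """
    return (8 - (position & 7)) & 7
```

A position that is already a multiple of 8 reads no bits, while position 13 reads 3 bits to reach position 16.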

Reserved OBU syntax

| reserved_obu( ) { | Type |

| } |

Note: Reserved OBUs do not have a defined syntax. The obu_type reserved values are reserved for future use. Decoders should ignore the entire OBU if they do not understand the obu_type. Ignoring the OBU can be done based on obu_size. The last byte of the valid content of the payload data for this OBU type is considered to be the last byte that is not equal to zero. This rule is to prevent the dropping of valid bytes by systems that interpret trailing zero bytes as a continuation of the trailing bits in an OBU. This implies that when any payload data is present for this OBU type, at least one byte of the payload data (including the trailing bit) shall not be equal to 0.

Sequence header OBU syntax

General sequence header OBU syntax

| sequence_header_obu( ) { | Type |

| seq_profile | f(3) |

| still_picture | f(1) |

| reduced_still_picture_header | f(1) |

| if ( reduced_still_picture_header ) { | |

| timing_info_present_flag = 0 | |

| decoder_model_info_present_flag = 0 | |

| initial_display_delay_present_flag = 0 | |

| operating_points_cnt_minus_1 = 0 | |

| operating_point_idc[ 0 ] = 0 | |

| seq_level_idx[ 0 ] | f(5) |

| seq_tier[ 0 ] = 0 | |

| decoder_model_present_for_this_op[ 0 ] = 0 | |

| initial_display_delay_present_for_this_op[ 0 ] = 0 | |

| } else { | |

| timing_info_present_flag | f(1) |

| if ( timing_info_present_flag ) { | |

| timing_info( ) | |

| decoder_model_info_present_flag | f(1) |

| if ( decoder_model_info_present_flag ) { | |

| decoder_model_info( ) | |

| } | |

| } else { | |

| decoder_model_info_present_flag = 0 | |

| } | |

| initial_display_delay_present_flag | f(1) |

| operating_points_cnt_minus_1 | f(5) |

| for ( i = 0; i <= operating_points_cnt_minus_1; i++ ) { | |

| operating_point_idc[ i ] | f(12) |

| seq_level_idx[ i ] | f(5) |

| if ( seq_level_idx[ i ] > 7 ) { | |

| seq_tier[ i ] | f(1) |

| } else { | |

| seq_tier[ i ] = 0 | |

| } | |

| if ( decoder_model_info_present_flag ) { | |

| decoder_model_present_for_this_op[ i ] | f(1) |

| if ( decoder_model_present_for_this_op[ i ] ) { | |

| operating_parameters_info( i ) | |

| } | |

| } else { | |

| decoder_model_present_for_this_op[ i ] = 0 | |

| } | |

| if ( initial_display_delay_present_flag ) { | |

| initial_display_delay_present_for_this_op[ i ] | f(1) |

| if ( initial_display_delay_present_for_this_op[ i ] ) { | |

| initial_display_delay_minus_1[ i ] | f(4) |

| } | |

| } | |

| } | |

| } | |

| operatingPoint = choose_operating_point( ) | |

| OperatingPointIdc = operating_point_idc[ operatingPoint ] | |

| frame_width_bits_minus_1 | f(4) |

| frame_height_bits_minus_1 | f(4) |

| n = frame_width_bits_minus_1 + 1 | |

| max_frame_width_minus_1 | f(n) |

| n = frame_height_bits_minus_1 + 1 | |

| max_frame_height_minus_1 | f(n) |

| if ( reduced_still_picture_header ) | |

| frame_id_numbers_present_flag = 0 | |

| else | |

| frame_id_numbers_present_flag | f(1) |

| if ( frame_id_numbers_present_flag ) { | |

| delta_frame_id_length_minus_2 | f(4) |

| additional_frame_id_length_minus_1 | f(3) |

| } | |

| use_128x128_superblock | f(1) |

| enable_filter_intra | f(1) |

| enable_intra_edge_filter | f(1) |

| if ( reduced_still_picture_header ) { | |

| enable_interintra_compound = 0 | |

| enable_masked_compound = 0 | |

| enable_warped_motion = 0 | |

| enable_dual_filter = 0 | |

| enable_order_hint = 0 | |

| enable_jnt_comp = 0 | |

| enable_ref_frame_mvs = 0 | |

| seq_force_screen_content_tools = SELECT_SCREEN_CONTENT_TOOLS | |

| seq_force_integer_mv = SELECT_INTEGER_MV | |

| OrderHintBits = 0 | |

| } else { | |

| enable_interintra_compound | f(1) |

| enable_masked_compound | f(1) |

| enable_warped_motion | f(1) |

| enable_dual_filter | f(1) |

| enable_order_hint | f(1) |

| if ( enable_order_hint ) { | |

| enable_jnt_comp | f(1) |

| enable_ref_frame_mvs | f(1) |

| } else { | |

| enable_jnt_comp = 0 | |

| enable_ref_frame_mvs = 0 | |

| } | |

| seq_choose_screen_content_tools | f(1) |

| if ( seq_choose_screen_content_tools ) { | |

| seq_force_screen_content_tools = SELECT_SCREEN_CONTENT_TOOLS | |

| } else { | |

| seq_force_screen_content_tools | f(1) |

| } | |

| if ( seq_force_screen_content_tools > 0 ) { | |

| seq_choose_integer_mv | f(1) |

| if ( seq_choose_integer_mv ) { | |

| seq_force_integer_mv = SELECT_INTEGER_MV | |

| } else { | |

| seq_force_integer_mv | f(1) |

| } | |

| } else { | |

| seq_force_integer_mv = SELECT_INTEGER_MV | |

| } | |

| if ( enable_order_hint ) { | |

| order_hint_bits_minus_1 | f(3) |

| OrderHintBits = order_hint_bits_minus_1 + 1 | |

| } else { | |

| OrderHintBits = 0 | |

| } | |

| } | |

| enable_superres | f(1) |

| enable_cdef | f(1) |

| enable_restoration | f(1) |

| color_config( ) | |

| film_grain_params_present | f(1) |

| } |

Color config syntax

| color_config( ) { | Type |

| high_bitdepth | f(1) |

| if ( seq_profile == 2 && high_bitdepth ) { | |

| twelve_bit | f(1) |

| BitDepth = twelve_bit ? 12 : 10 | |

| } else if ( seq_profile <= 2 ) { | |

| BitDepth = high_bitdepth ? 10 : 8 | |

| } | |

| if ( seq_profile == 1 ) { | |

| mono_chrome = 0 | |

| } else { | |

| mono_chrome | f(1) |

| } | |

| NumPlanes = mono_chrome ? 1 : 3 | |

| color_description_present_flag | f(1) |

| if ( color_description_present_flag ) { | |

| color_primaries | f(8) |

| transfer_characteristics | f(8) |

| matrix_coefficients | f(8) |

| } else { | |

| color_primaries = CP_UNSPECIFIED | |

| transfer_characteristics = TC_UNSPECIFIED | |

| matrix_coefficients = MC_UNSPECIFIED | |

| } | |

| if ( mono_chrome ) { | |

| color_range | f(1) |

| subsampling_x = 1 | |

| subsampling_y = 1 | |

| chroma_sample_position = CSP_UNKNOWN | |

| separate_uv_delta_q = 0 | |

| return | |

| } else if ( color_primaries == CP_BT_709 && | |

| transfer_characteristics == TC_SRGB && | |

| matrix_coefficients == MC_IDENTITY ) { | |

| color_range = 1 | |

| subsampling_x = 0 | |

| subsampling_y = 0 | |

| } else { | |

| color_range | f(1) |

| if ( seq_profile == 0 ) { | |

| subsampling_x = 1 | |

| subsampling_y = 1 | |

| } else if ( seq_profile == 1 ) { | |

| subsampling_x = 0 | |

| subsampling_y = 0 | |

| } else { | |

| if ( BitDepth == 12 ) { | |

| subsampling_x | f(1) |

| if ( subsampling_x ) | |

| subsampling_y | f(1) |

| else | |

| subsampling_y = 0 | |

| } else { | |

| subsampling_x = 1 | |

| subsampling_y = 0 | |

| } | |

| } | |

| if ( subsampling_x && subsampling_y ) { | |

| chroma_sample_position | f(2) |

| } | |

| } | |

| separate_uv_delta_q | f(1) |

| } |
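The BitDepth derivation at the top of color_config( ) can be sketched as a pure function of the three values involved. The function name is hypothetical. The table assigns no BitDepth when seq_profile is greater than 2, so raising `ValueError` in that case is a choice made for this example.

```python
def derive_bit_depth(seq_profile: int, high_bitdepth: int,
                     twelve_bit: int = 0) -> int:
    """Return BitDepth as derived in color_config().

    twelve_bit is only read from the bitstream when seq_profile == 2
    and high_bitdepth is set; it is ignored otherwise.
    """
    if seq_profile == 2 and high_bitdepth:
        return 12 if twelve_bit else 10
    if seq_profile <= 2:
        return 10 if high_bitdepth else 8
    raise ValueError("BitDepth is not assigned for seq_profile > 2")
```

Only seq_profile 2 can produce a BitDepth of 12; the other profiles are limited to 8 or 10.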

Timing info syntax

| timing_info( ) { | Type |

| num_units_in_display_tick | f(32) |

| time_scale | f(32) |

| equal_picture_interval | f(1) |

| if ( equal_picture_interval ) | |

| num_ticks_per_picture_minus_1 | uvlc() |

| } |

Decoder model info syntax

| decoder_model_info( ) { | Type |

| buffer_delay_length_minus_1 | f(5) |

| num_units_in_decoding_tick | f(32) |

| buffer_removal_time_length_minus_1 | f(5) |

| frame_presentation_time_length_minus_1 | f(5) |

| } |

Operating parameters info syntax

| operating_parameters_info( op ) { | Type |

| n = buffer_delay_length_minus_1 + 1 | |

| decoder_buffer_delay[ op ] | f(n) |

| encoder_buffer_delay[ op ] | f(n) |

| low_delay_mode_flag[ op ] | f(1) |

| } |

Temporal delimiter obu syntax

| temporal_delimiter_obu( ) { | Type |

| SeenFrameHeader = 0 | |

| } |

Note: The temporal delimiter has an empty payload.

Padding OBU syntax

| padding_obu( ) { | Type |

| for ( i = 0; i < obu_padding_length; i++ ) | |

| obu_padding_byte | f(8) |

| } |

Note: obu_padding_length is not coded in the bitstream but can be computed based on obu_size minus the number of trailing bytes. In practice, though, since this is padding data meant to be skipped, decoders do not need to determine either that length or the number of trailing bytes. They can ignore the entire OBU. Ignoring the OBU can be done based on obu_size. The last byte of the valid content of the payload data for this OBU type is considered to be the last byte that is not equal to zero. This rule is to prevent the dropping of valid bytes by systems that interpret trailing zero bytes as a continuation of the trailing bits in an OBU. This implies that when any payload data is present for this OBU type, at least one byte of the payload data (including the trailing bit) shall not be equal to 0.
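The rule about the last non-zero byte can be illustrated with a short sketch that finds where the valid content of a payload ends. The function name is hypothetical, and the payload is assumed to be the obu_size bytes that follow the OBU header and size field.

```python
def valid_payload_length(payload: bytes) -> int:
    """Return the number of bytes up to and including the last non-zero byte.

    Trailing zero bytes are treated as a continuation of the trailing bits,
    so they are not counted as valid content.
    """
    end = len(payload)
    while end > 0 and payload[end - 1] == 0:
        end -= 1
    return end
```

For a payload of 0x01 0x02 0x80 0x00 0x00, the valid content is the first 3 bytes, the last of which holds the trailing one bit.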

Metadata OBU syntax

General metadata OBU syntax

| metadata_obu( ) { | Type |

| metadata_type | leb128() |

| if ( metadata_type == METADATA_TYPE_ITUT_T35 ) | |

| metadata_itut_t35( ) | |

| else if ( metadata_type == METADATA_TYPE_HDR_CLL ) | |

| metadata_hdr_cll( ) | |

| else if ( metadata_type == METADATA_TYPE_HDR_MDCV ) | |

| metadata_hdr_mdcv( ) | |

| else if ( metadata_type == METADATA_TYPE_SCALABILITY ) | |

| metadata_scalability( ) | |

| else if ( metadata_type == METADATA_TYPE_TIMECODE ) | |

| metadata_timecode( ) | |

| } |

Note: The exact syntax of metadata_obu is not defined in this specification when metadata_type is equal to a value reserved for future use or a user private value. Decoders should ignore the entire OBU if they do not understand the metadata_type. The last byte of the valid content of the data is considered to be the last byte that is not equal to zero. This rule is to prevent the dropping of valid bytes by systems that interpret trailing zero bytes as a padding continuation of the trailing bits in an OBU. This implies that when any payload data is present for this OBU type, at least one byte of the payload data (including the trailing bit) shall not be equal to 0.

Metadata ITUT T35 syntax

| metadata_itut_t35( ) { | Type |

| itu_t_t35_country_code | f(8) |

| if ( itu_t_t35_country_code == 0xFF ) { | |

| itu_t_t35_country_code_extension_byte | f(8) |

| } | |

| itu_t_t35_payload_bytes | |

| } |

Note: The exact syntax of itu_t_t35_payload_bytes is not defined in this specification. External specifications can define the syntax. Decoders should ignore the entire OBU if they do not understand it. The last byte of the valid content of the data is considered to be the last byte that is not equal to zero. This rule is to prevent the dropping of valid bytes by systems that interpret trailing zero bytes as a padding continuation of the trailing bits in an OBU. This implies that when any payload data is present for this OBU type, at least one byte of the payload data (including the trailing bit) shall not be equal to 0.

Metadata high dynamic range content light level syntax

| metadata_hdr_cll( ) { | Type |

| max_cll | f(16) |

| max_fall | f(16) |

| } |
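
A minimal sketch of reading the two fields above, assuming the input starts at max_cll and that f(16) values are stored most significant byte first. The function name parse_metadata_hdr_cll is hypothetical.

```python
import struct


def parse_metadata_hdr_cll(data: bytes):
    """Sketch of metadata_hdr_cll( ): returns (max_cll, max_fall) as raw integers."""
    if len(data) < 4:
        raise ValueError("metadata_hdr_cll needs at least 4 bytes")
    # Two unsigned 16-bit fields, big-endian, read from the start of the buffer.
    max_cll, max_fall = struct.unpack_from(">HH", data, 0)
    return max_cll, max_fall
```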

Metadata high dynamic range mastering display color volume syntax

| metadata_hdr_mdcv( ) { | Type |

| for ( i = 0; i < 3; i++ ) { | |

| primary_chromaticity_x[ i ] | f(16) |

| primary_chromaticity_y[ i ] | f(16) |

| } | |

| white_point_chromaticity_x | f(16) |

| white_point_chromaticity_y | f(16) |

| luminance_max | f(32) |

| luminance_min | f(32) |

| } |
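
The fixed layout above is 24 bytes long: eight 16-bit fields followed by two 32-bit fields. The following sketch reads it in one step, assuming big-endian storage and input that starts at primary_chromaticity_x[ 0 ]. The names HdrMdcv and parse_metadata_hdr_mdcv are hypothetical, and the values are returned as raw integers without any fixed-point scaling.

```python
import struct
from typing import NamedTuple, Tuple


class HdrMdcv(NamedTuple):
    primaries: Tuple[Tuple[int, int], ...]  # (x, y) for i = 0..2, raw values
    white_point: Tuple[int, int]            # (x, y), raw values
    luminance_max: int                      # raw f(32) value
    luminance_min: int                      # raw f(32) value


# 3 * (x, y) + white point (x, y) = 8 unsigned 16-bit fields, then 2 unsigned 32-bit fields.
_MDCV_LAYOUT = struct.Struct(">8H2I")


def parse_metadata_hdr_mdcv(data: bytes) -> HdrMdcv:
    """Sketch of metadata_hdr_mdcv( ) over a byte buffer."""
    if len(data) < _MDCV_LAYOUT.size:
        raise ValueError("metadata_hdr_mdcv needs at least 24 bytes")
    fields = _MDCV_LAYOUT.unpack_from(data, 0)
    # x and y are interleaved per primary, matching the loop in the syntax table.
    primaries = tuple((fields[2 * i], fields[2 * i + 1]) for i in range(3))
    return HdrMdcv(primaries, (fields[6], fields[7]), fields[8], fields[9])
```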

Metadata scalability syntax

| metadata_scalability( ) { | Type |

| scalability_mode_idc | f(8) |

| if ( scalability_mode_idc == SCALABILITY_SS ) | |

| scalability_structure( ) | |

| } |

Scalability structure syntax

| scalability_structure( ) { | Type |

| spatial_layers_cnt_minus_1 | f(2) |

| spatial_layer_dimensions_present_flag | f(1) |

| spatial_layer_description_present_flag | f(1) |

| temporal_group_description_present_flag | f(1) |

| scalability_structure_reserved_3bits | f(3) |

| if ( spatial_layer_dimensions_present_flag ) { | |

| for ( i = 0; i <= spatial_layers_cnt_minus_1 ; i++ ) { | |

| spatial_layer_max_width[ i ] | f(16) |

| spatial_layer_max_height[ i ] | f(16) |

| } | |

| } | |

| if ( spatial_layer_description_present_flag ) { | |

| for ( i = 0; i <= spatial_layers_cnt_minus_1; i++ ) | |

| spatial_layer_ref_id[ i ] | f(8) |

| } | |

| if ( temporal_group_description_present_flag ) { | |

| temporal_group_size | f(8) |

| for ( i = 0; i < temporal_group_size; i++ ) { | |

| temporal_group_temporal_id[ i ] | f(3) |

| temporal_group_temporal_switching_up_point_flag[ i ] | f(1) |

| temporal_group_spatial_switching_up_point_flag[ i ] | f(1) |

| temporal_group_ref_cnt[ i ] | f(3) |

| for ( j = 0; j < temporal_group_ref_cnt[ i ]; j++ ) { | |

| temporal_group_ref_pic_diff[ i ][ j ] | f(8) |

| } | |

| } | |

| } | |

| } |
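
The nested loops above can be sketched with a small bit reader. This is an illustration under stated assumptions, not a reference implementation: BitReader and parse_scalability_structure are hypothetical names, f(n) is assumed to read n bits most significant bit first, and the result is a plain dictionary keyed by the syntax element names.

```python
class BitReader:
    """Hypothetical MSB-first bit reader over a byte buffer."""

    def __init__(self, data: bytes):
        self.data = data
        self.pos = 0  # current position in bits

    def f(self, n: int) -> int:
        """Read n bits as an unsigned integer, most significant bit first."""
        value = 0
        for _ in range(n):
            if self.pos >= 8 * len(self.data):
                raise ValueError("read past end of data")
            byte = self.data[self.pos >> 3]
            value = (value << 1) | ((byte >> (7 - (self.pos & 7))) & 1)
            self.pos += 1
        return value


def parse_scalability_structure(r: BitReader) -> dict:
    """Sketch of scalability_structure( ) following the table row by row."""
    s = {}
    cnt_minus_1 = r.f(2)
    dimensions_present = r.f(1)
    description_present = r.f(1)
    temporal_group_present = r.f(1)
    r.f(3)  # scalability_structure_reserved_3bits, value not used here
    s["spatial_layers_cnt_minus_1"] = cnt_minus_1

    if dimensions_present:
        widths, heights = [], []
        for _ in range(cnt_minus_1 + 1):
            widths.append(r.f(16))   # spatial_layer_max_width[ i ]
            heights.append(r.f(16))  # spatial_layer_max_height[ i ]
        s["spatial_layer_max_width"] = widths
        s["spatial_layer_max_height"] = heights

    if description_present:
        s["spatial_layer_ref_id"] = [r.f(8) for _ in range(cnt_minus_1 + 1)]

    if temporal_group_present:
        groups = []
        for _ in range(r.f(8)):  # temporal_group_size
            entry = {
                "temporal_id": r.f(3),
                "temporal_switching_up_point_flag": r.f(1),
                "spatial_switching_up_point_flag": r.f(1),
            }
            ref_cnt = r.f(3)  # temporal_group_ref_cnt[ i ]
            entry["ref_pic_diff"] = [r.f(8) for _ in range(ref_cnt)]
            groups.append(entry)
        s["temporal_group"] = groups
    return s
```

The dictionary keys are present only when the corresponding presence flag is set, which mirrors the conditional structure of the table.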

Metadata timecode syntax

| metadata_timecode( ) { | Type |

| counting_type | f(5) |

| full_timestamp_flag | f(1) |

| discontinuity_flag | f(1) |

| cnt_dropped_flag | f(1) |

| n_frames | f(9) |

| if ( full_timestamp_flag ) { | |

| seconds_value | f(6) |

| minutes_value | f(6) |

| hours_value | f(5) |

| } else { | |

| seconds_flag | f(1) |

| if ( seconds_flag ) { | |

| seconds_value | f(6) |

| minutes_flag | f(1) |

| if ( minutes_flag ) { | |

| minutes_value | f(6) |

| hours_flag | f(1) |

| if ( hours_flag ) { | |

| hours_value | f(5) |

| } | |

| } | |

| } | |

| } | |

| time_offset_length | f(5) |

| if ( time_offset_length > 0 ) { | |

| time_offset_value | f(time_offset_length) |

| } | |

| } |
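
The conditional nesting of the seconds, minutes, and hours fields can be sketched as follows. This is an illustrative reading of the table above: BitReader and parse_metadata_timecode are hypothetical names, and f(n) is assumed to read n bits most significant bit first.

```python
class BitReader:
    """Hypothetical MSB-first bit reader over a byte buffer."""

    def __init__(self, data: bytes):
        self.data = data
        self.pos = 0  # current position in bits

    def f(self, n: int) -> int:
        """Read n bits as an unsigned integer, most significant bit first."""
        value = 0
        for _ in range(n):
            if self.pos >= 8 * len(self.data):
                raise ValueError("read past end of data")
            byte = self.data[self.pos >> 3]
            value = (value << 1) | ((byte >> (7 - (self.pos & 7))) & 1)
            self.pos += 1
        return value


def parse_metadata_timecode(r: BitReader) -> dict:
    """Sketch of metadata_timecode( ); absent fields are left out of the result."""
    t = {}
    t["counting_type"] = r.f(5)
    t["full_timestamp_flag"] = r.f(1)
    t["discontinuity_flag"] = r.f(1)
    t["cnt_dropped_flag"] = r.f(1)
    t["n_frames"] = r.f(9)

    if t["full_timestamp_flag"]:
        # All three fields are coded unconditionally.
        t["seconds_value"] = r.f(6)
        t["minutes_value"] = r.f(6)
        t["hours_value"] = r.f(5)
    elif r.f(1):  # seconds_flag
        t["seconds_value"] = r.f(6)
        if r.f(1):  # minutes_flag, only coded when seconds are present
            t["minutes_value"] = r.f(6)
            if r.f(1):  # hours_flag, only coded when minutes are present
                t["hours_value"] = r.f(5)

    t["time_offset_length"] = r.f(5)
    if t["time_offset_length"] > 0:
        # The width of time_offset_value is itself read from the bitstream.
        t["time_offset_value"] = r.f(t["time_offset_length"])
    return t
```

Without a full timestamp, each finer unit gates the next coarser one, so hours can only be present when both seconds and minutes are.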

Frame header OBU syntax

General frame header OBU syntax

| frame_header_obu( ) { | Type |

| if ( SeenFrameHeader == 1 ) { | |

| frame_header_copy() | |

| } else { | |

| SeenFrameHeader = 1 | |

| uncompressed_header( ) | |

| if ( show_existing_frame ) { | |

| decode_frame_wrapup( ) | |

| SeenFrameHeader = 0 | |

| } else { | |

| TileNum = 0 | |

| SeenFrameHeader = 1 | |

| } | |

| } | |

| } |
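
The SeenFrameHeader handling above behaves like a small state machine, sketched below. The class FrameHeaderState and the three callback parameters are hypothetical stand-ins for decoder state and for the uncompressed_header( ), frame_header_copy( ), and decode_frame_wrapup( ) processes. The uncompressed_header callback is assumed to return the value of show_existing_frame. Resets of SeenFrameHeader that happen outside frame_header_obu( ) are not modeled.

```python
class FrameHeaderState:
    """Hypothetical container for the two variables touched by frame_header_obu( )."""

    def __init__(self):
        self.seen_frame_header = 0  # SeenFrameHeader
        self.tile_num = 0           # TileNum


def frame_header_obu(state, uncompressed_header, frame_header_copy, decode_frame_wrapup):
    """Sketch of the control flow in frame_header_obu( )."""
    if state.seen_frame_header == 1:
        # A header was already seen for this frame: this OBU is a repeat.
        frame_header_copy()
        return

    state.seen_frame_header = 1
    show_existing_frame = uncompressed_header()
    if show_existing_frame:
        # Nothing more to decode for this frame, so the next header starts a new one.
        decode_frame_wrapup()
        state.seen_frame_header = 0
    else:
        state.tile_num = 0
        state.seen_frame_header = 1
```

In this sketch a second call without an intervening reset takes the copy path, which matches the first branch of the table.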

Uncompressed header syntax

| uncompressed_header( ) { | Type |

| if ( frame_id_numbers_present_flag ) { | |

| idLen = ( additional_frame_id_length_minus_1 + | |

| delta_frame_id_length_minus_2 + 3 ) | |

| } | |

| allFrames = (1 << NUM_REF_FRAMES) - 1 | |

| if ( reduced_still_picture_header ) { | |

| show_existing_frame = 0 | |

| frame_type = KEY_FRAME | |

| FrameIsIntra = 1 | |

| show_frame = 1 | |

| showable_frame = 0 | |

| } else { | |

| show_existing_frame | f(1) |

| if ( show_existing_frame == 1 ) { | |

| frame_to_show_map_idx | f(3) |

| if ( decoder_model_info_present_flag && !equal_picture_interval ) { | |

| temporal_point_info( ) | |

| } | |

| refresh_frame_flags = 0 | |

| if ( frame_id_numbers_present_flag ) { | |

| display_frame_id | f(idLen) |

| } | |

| frame_type = RefFrameType[ frame_to_show_map_idx ] | |

| if ( frame_type == KEY_FRAME ) { | |

| refresh_frame_flags = allFrames | |

| } | |

| if ( film_grain_params_present ) { | |

| load_grain_params( frame_to_show_map_idx ) | |

| } | |

| return | |

| } | |

| frame_type | f(2) |

| FrameIsIntra = (frame_type == INTRA_ONLY_FRAME || | |

| frame_type == KEY_FRAME) | |

| show_frame | f(1) |

| if ( show_frame && decoder_model_info_present_flag && !equal_picture_interval ) { | |

| temporal_point_info( ) | |

| } | |

| if ( show_frame ) { | |

| showable_frame = frame_type != KEY_FRAME | |

| } else { | |

| showable_frame | f(1) |

| } | |

| if ( frame_type == SWITCH_FRAME || | |

| ( frame_type == KEY_FRAME && show_frame ) ) | |

| error_resilient_mode = 1 | |

| else | |

| error_resilient_mode | f(1) |

| } | |

| if ( frame_type == KEY_FRAME && show_frame ) { | |

| for ( i = 0; i < NUM_REF_FRAMES; i++ ) { | |

| RefValid[ i ] = 0 | |

| RefOrderHint[ i ] = 0 | |

| } | |

| for ( i = 0; i < REFS_PER_FRAME; i++ ) { | |

| OrderHints[ LAST_FRAME + i ] = 0 | |

| } | |

| } | |

| disable_cdf_update | f(1) |

| if ( seq_force_screen_content_tools == SELECT_SCREEN_CONTENT_TOOLS ) { | |

| allow_screen_content_tools | f(1) |

| } else { | |

| allow_screen_content_tools = seq_force_screen_content_tools | |

| } | |

| if ( allow_screen_content_tools ) { | |

| if ( seq_force_integer_mv == SELECT_INTEGER_MV ) { | |

| force_integer_mv | f(1) |

| } else { | |

| force_integer_mv = seq_force_integer_mv | |

| } | |

| } else { | |

| force_integer_mv = 0 | |

| } | |

| if ( FrameIsIntra ) { | |

| force_integer_mv = 1 | |

| } | |

| if ( frame_id_numbers_present_flag ) { | |

| PrevFrameID = current_frame_id | |

| current_frame_id | f(idLen) |

| mark_ref_frames( idLen ) | |

| } else { | |

| current_frame_id = 0 | |

| } | |

| if ( frame_type == SWITCH_FRAME ) | |

| frame_size_override_flag = 1 | |

| else if ( reduced_still_picture_header ) | |

| frame_size_override_flag = 0 | |

| else | |

| frame_size_override_flag | f(1) |

| order_hint | f(OrderHintBits) |

| OrderHint = order_hint | |

| if ( FrameIsIntra || error_resilient_mode ) { | |

| primary_ref_frame = PRIMARY_REF_NONE | |

| } else { | |

| primary_ref_frame | f(3) |

| } | |

| if ( decoder_model_info_present_flag ) { | |

| buffer_removal_time_present_flag | f(1) |

| if ( buffer_removal_time_present_flag ) { | |

| for ( opNum = 0; opNum <= operating_points_cnt_minus_1; opNum++ ) { | |

| if ( decoder_model_present_for_this_op[ opNum ] ) { | |

| opPtIdc = operating_point_idc[ opNum ] | |

| inTemporalLayer = ( opPtIdc >> temporal_id ) & 1 | |